When My Land Title Took 6 Months: What $VANRY's AI-Optimized Consensus Means for Real-World Assets Beyond DeFi

I still remember the smell of old paper and dust the day I went to the sub-registrar’s office to verify a small piece of land my family wanted to purchase. The ceiling fan was spinning but barely moving the heat. Files were stacked in uneven towers. A clerk flipped through a thick register with ink-stained fingers. I was given token number 47 even though only 19 people were in the room. “System slow,” someone said.

The seller had one version of the title. The local broker had another photocopy. The government portal showed “Record Not Updated.” A bank officer later told me they needed physical verification before approving a loan. It took six months for a document that supposedly represents ownership to feel real. 🏠

That day, I wasn’t thinking about blockchain. I was thinking about something more basic: why does ownership depend on paperwork scattered across institutions that don’t trust each other?

The Structural Friction We Ignore

We talk about tokenization like it’s a technical upgrade. But the real problem is older and uglier. Our asset systems — land, invoices, art, warehouse receipts, carbon credits — are built on fragmented ledgers. Each institution keeps its own “truth.” Courts interpret one version. Banks rely on another. Regulators audit a third.

It’s not corruption alone. It’s coordination failure.

Imagine ownership not as a document but as a conversation between institutions that never ends. Every time an asset changes hands, that conversation has to restart. That’s where delays, disputes, and rent-seeking enter.

Tokenization promises to compress this conversation into a single shared record. But here’s the uncomfortable reality: most blockchains were not designed for real-world semantic complexity.

They record transactions well. They do not understand context.

If you tokenize land on a generic chain, the chain knows that Token #453 moved from A to B. It does not know:

Whether the municipal zoning changed.

Whether a court placed a lien.

Whether environmental clearance was revoked.

Whether a bank holds collateral rights.

Real-world assets are not just ownership transfers. They are layered legal narratives.
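To make that gap concrete, here is a minimal Python sketch. Everything in it is hypothetical: `Transfer`, `Encumbrance`, `LandAsset`, and `can_transfer` are names I made up for illustration, not any chain's actual data model. The point is only that a bare transfer record carries none of the layered claims that decide whether the transfer is clean.

```python
from dataclasses import dataclass, field


@dataclass
class Transfer:
    """What a generic chain records: which token moved from whom to whom."""
    token_id: int
    sender: str
    receiver: str


@dataclass
class Encumbrance:
    """A hypothetical legal claim layered on top of the asset."""
    kind: str              # e.g. "lien", "zoning_change", "collateral"
    issuer: str            # e.g. "district_court", "bank"
    blocks_transfer: bool  # assumed flag; real law is rarely this binary


@dataclass
class LandAsset:
    token_id: int
    owner: str
    encumbrances: list[Encumbrance] = field(default_factory=list)

    def can_transfer(self) -> bool:
        # A transfer is clean only if no recorded claim blocks it.
        return not any(e.blocks_transfer for e in self.encumbrances)


# The transfer record alone says nothing about the lien below.
transfer = Transfer(token_id=453, sender="A", receiver="B")
asset = LandAsset(token_id=453, owner="A")
asset.encumbrances.append(Encumbrance("lien", "district_court", True))
print(asset.can_transfer())  # False
```

The `Transfer` object would validate fine on its own. Only the richer `LandAsset` record reveals that a court has a claim on the parcel.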

Why the System Breaks

There are three structural reasons real-world asset tokenization keeps stalling beyond pilot projects:

1. Institutional Fragmentation

Land records sit with municipal authorities. Mortgage records with banks. Tax compliance with revenue departments. Securities regulation with bodies like the SEC or SEBI. These systems were not built to interoperate.

2. Incentive Misalignment

Bureaucracies are rewarded for procedural compliance, not speed. Banks are rewarded for risk minimization, not innovation. Regulators prioritize systemic stability over technological experimentation.

3. Data Without Meaning

Even if data is digitized, it is not semantically structured. A PDF of a contract is digital, but it is not machine-understandable.

This is where many blockchain narratives oversimplify.

Ethereum excels at programmable settlement but struggles with high gas costs and scalability for heavy, context-rich data layers.

Solana offers high throughput, but speed alone does not solve semantic interpretation.

Both are powerful coordination engines. Neither natively addresses the question: How do we embed meaning into consensus?

Reframing the Problem

Think of real-world assets as living organisms. Ownership is not static. It evolves through regulation, litigation, taxation, environmental review, and social claims.

Traditional systems treat these changes as separate files. Blockchains treat them as isolated transactions.

What if consensus didn’t just agree on “what happened,” but also optimized around the relevance and interpretation of data?

That is a different category of infrastructure.

Where #vanar Enters the Frame

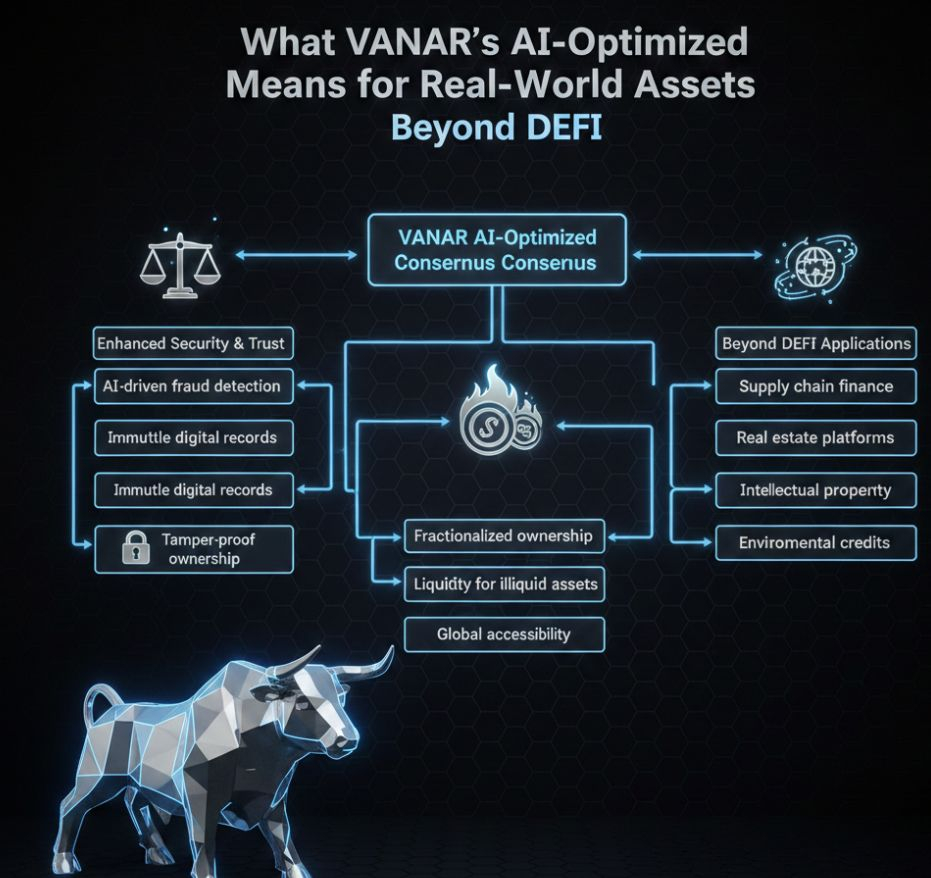

@Vanarchain introduces two architectural ideas that matter here: AI-optimized consensus and a semantic data layer.

Taken at face value, these are design choices rather than marketing labels.

AI-optimized consensus implies that validation and network agreement can be tuned for efficiency and adaptive decision-making, rather than static rule enforcement alone. That matters when tokenized assets depend on dynamic real-world inputs.

The semantic data layer, more importantly, attempts to organize information not just as storage, but as contextual relationships. Instead of merely anchoring hashes of documents, the system aims to structure meaning in a way machines can query.
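One way to picture "contextual relationships a machine can query" is a small set of subject-predicate-object facts. The sketch below is my own illustration in plain Python, not Vanar's storage format or API. The identifiers (`parcel:453`, `court_order:17`) and the helper names `query` and `lien_issuers` are assumptions.

```python
# Hypothetical (subject, predicate, object) facts about one asset.
facts = [
    ("parcel:453", "owned_by", "person:A"),
    ("parcel:453", "zoned_as", "residential"),
    ("parcel:453", "has_lien", "court_order:17"),
    ("court_order:17", "issued_by", "district_court"),
]


def query(subject=None, predicate=None, obj=None):
    """Return every fact that matches the fields that are not None."""
    return [
        (s, p, o)
        for (s, p, o) in facts
        if (subject is None or s == subject)
        and (predicate is None or p == predicate)
        and (obj is None or o == obj)
    ]


def lien_issuers(parcel):
    """Follow parcel -> lien -> issuer, a two-hop relationship query."""
    issuers = []
    for _, _, lien in query(subject=parcel, predicate="has_lien"):
        for _, _, issuer in query(subject=lien, predicate="issued_by"):
            issuers.append(issuer)
    return issuers


print(lien_issuers("parcel:453"))  # ['district_court']
```

A hash of a scanned court order could never answer "who placed a lien on this parcel?" Structured relationships can, which is the difference between anchoring documents and structuring meaning.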

If tokenization is to extend beyond DeFi into land, commodities, supply chains, or infrastructure bonds, the chain must handle layered metadata intelligently.

Otherwise, you are simply digitizing chaos.

Implications Beyond DeFi

Consider infrastructure bonds issued by municipalities. Today, investors rely on rating agencies, PDF disclosures, and fragmented reporting. If tokenized on a semantic layer:

Regulatory updates could attach directly to the asset token.

Environmental compliance could update as machine-readable events.

Payment risk signals could be analyzed algorithmically.

Example:

A solar farm bond is tokenized. A regulatory body changes subsidy policy. On a traditional system, investors read about it weeks later. On a semantic-aware chain, the subsidy change is linked to the bond’s data structure in near real time, altering risk models automatically. 🌱
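Here is a toy version of that solar farm example in Python. It is a sketch under loud assumptions: `PolicyEvent`, `BondToken`, and the additive risk formula are invented for illustration, and the numbers are placeholders rather than real data. It shows only the mechanism: an event attached to the token changes what a risk model computes.

```python
from dataclasses import dataclass, field


@dataclass
class PolicyEvent:
    """A hypothetical machine-readable regulatory update."""
    source: str
    kind: str
    risk_delta: float  # assumed impact on the risk score; illustrative only


@dataclass
class BondToken:
    name: str
    base_risk: float
    events: list[PolicyEvent] = field(default_factory=list)

    def attach(self, event: PolicyEvent) -> None:
        # Linking the event to the token is what makes it queryable later.
        self.events.append(event)

    def risk_score(self) -> float:
        # Toy model: base risk plus the sum of event impacts, clamped to [0, 1].
        score = self.base_risk + sum(e.risk_delta for e in self.events)
        return max(0.0, min(1.0, score))


bond = BondToken(name="solar-farm-bond", base_risk=0.20)
before = bond.risk_score()

# The regulator cuts the subsidy; the event is attached to the bond itself.
bond.attach(PolicyEvent("energy_regulator", "subsidy_cut", 0.15))
after = bond.risk_score()

print(before, after)
```

A real risk model would be far more involved. What matters is that the score is derived from events linked to the asset, so any consumer recomputing it sees the subsidy change as soon as the event is recorded.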

This is not about speculation. It is about reducing information asymmetry.

Risks and Tensions

However, embedding AI into consensus introduces its own tensions.

1. Governance Risk

Who defines the AI optimization criteria? If consensus adapts, who audits its behavior?

2. Regulatory Scrutiny

Real-world asset tokenization touches securities law, property law, and cross-border regulation. AI layers may complicate accountability.

3. Data Integrity Dependency

Semantic structure is only as strong as its inputs. Garbage in, structured garbage out.

There is also the philosophical question: Should machines interpret legal nuance?

Courts evolve precedent through human judgment. If semantic layers attempt to codify contextual relationships, they risk oversimplifying ambiguity that law intentionally preserves.

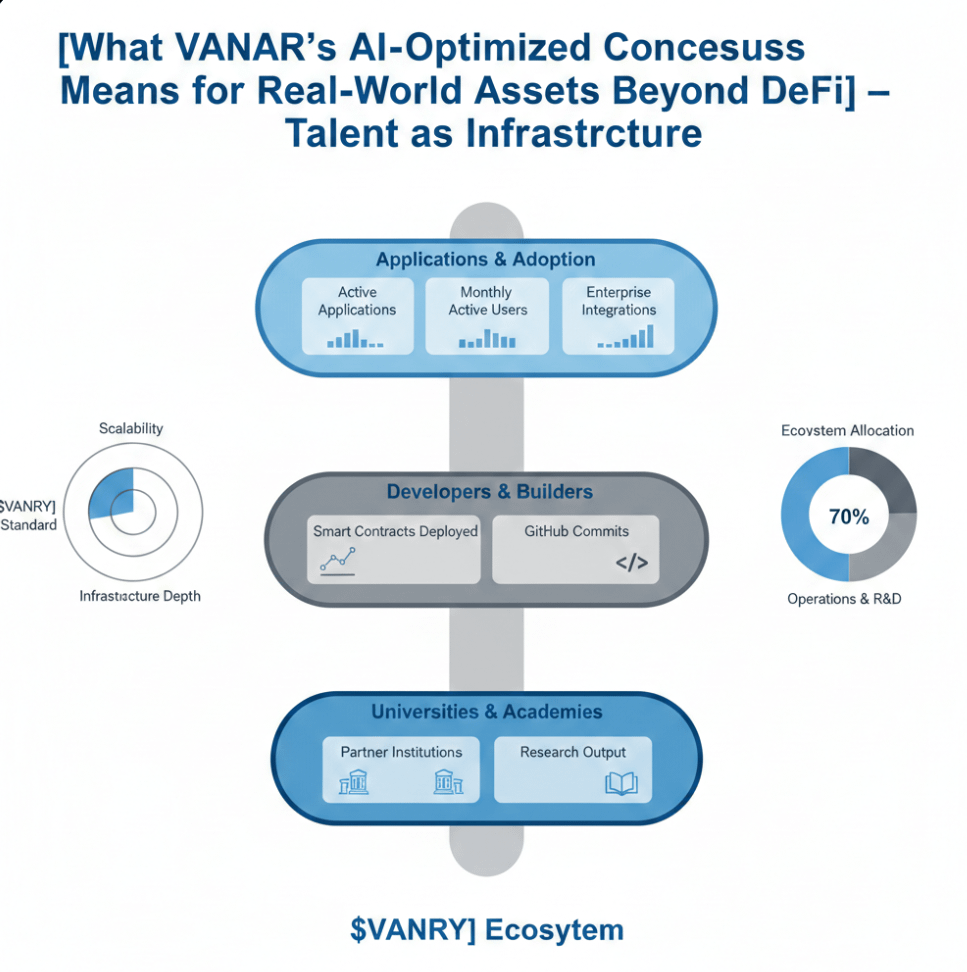

Visual Framework: Tokenized Asset Lifecycle Timeline

Proposed Timeline Visual: “From Physical Asset to AI-Optimized Token”

1. Physical Asset (Land / Bond / Commodity)

2. Legal Documentation Digitization

3. Semantic Structuring of Rights & Obligations

4. AI-Optimized Consensus Validation

5. Continuous Regulatory & Environmental Updates

6. Secondary Market Liquidity

This timeline matters because it shows tokenization as a process, not a minting event.
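The same six stages can be written as an ordered enumeration, which makes "process, not event" something code can enforce. This is a hedged sketch: the `Stage` names paraphrase the list above, and `advance` and `remaining` are hypothetical helpers, not part of any protocol.

```python
from enum import IntEnum


class Stage(IntEnum):
    PHYSICAL_ASSET = 1
    DOCUMENT_DIGITIZATION = 2
    SEMANTIC_STRUCTURING = 3
    CONSENSUS_VALIDATION = 4
    CONTINUOUS_UPDATES = 5
    SECONDARY_LIQUIDITY = 6


def advance(current: Stage) -> Stage:
    """Move to the next stage; stages cannot be skipped."""
    if current is Stage.SECONDARY_LIQUIDITY:
        raise ValueError("already at the final stage")
    return Stage(current + 1)


def remaining(current: Stage) -> list[Stage]:
    """Stages still ahead of an asset that has reached `current`."""
    return [s for s in Stage if s > current]


stage = advance(Stage.DOCUMENT_DIGITIZATION)
print(stage.name)             # SEMANTIC_STRUCTURING
print(len(remaining(stage)))  # 3
```

An asset that has reached semantic structuring still has three stages ahead of it before it trades on a secondary market.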

Most current projects stop at step 2 or 3.

Token Mechanics Without Hype

For $VANRY’s token economics to matter in this architecture, utility must be tied to data validation, semantic processing, and governance participation, not just transaction fees.

If token demand depends on real-world asset onboarding and semantic queries, it aligns incentives toward adoption rather than speculation.

But that alignment is fragile. If usage fails to scale beyond niche pilots, the token becomes detached from the architecture’s promise.

The Broader Implication

When I think back to that land office, what frustrated me wasn’t the delay. It was the opacity. I could not see the full narrative of the asset in one place. Every institution guarded its fragment.

If AI-optimized consensus and semantic data layers succeed, they do not merely tokenize assets. They compress institutional memory into a shared computational structure. 📚

That changes how capital markets perceive risk. It changes how collateral is assessed. It changes how governments issue and monitor obligations.

But it also concentrates interpretive power into protocol design.

If machines begin to mediate not just transactions but meaning — who ultimately controls the narrative of ownership?