@Vanarchain I was back at my desk at 2:03 p.m. after a client call, the kind where everyone nods at next steps and then immediately scatters. My notebook was open to a page of half-finished action items. I tried an “agent” to clean it up and watched it lose the thread halfway through. How far am I supposed to trust this?

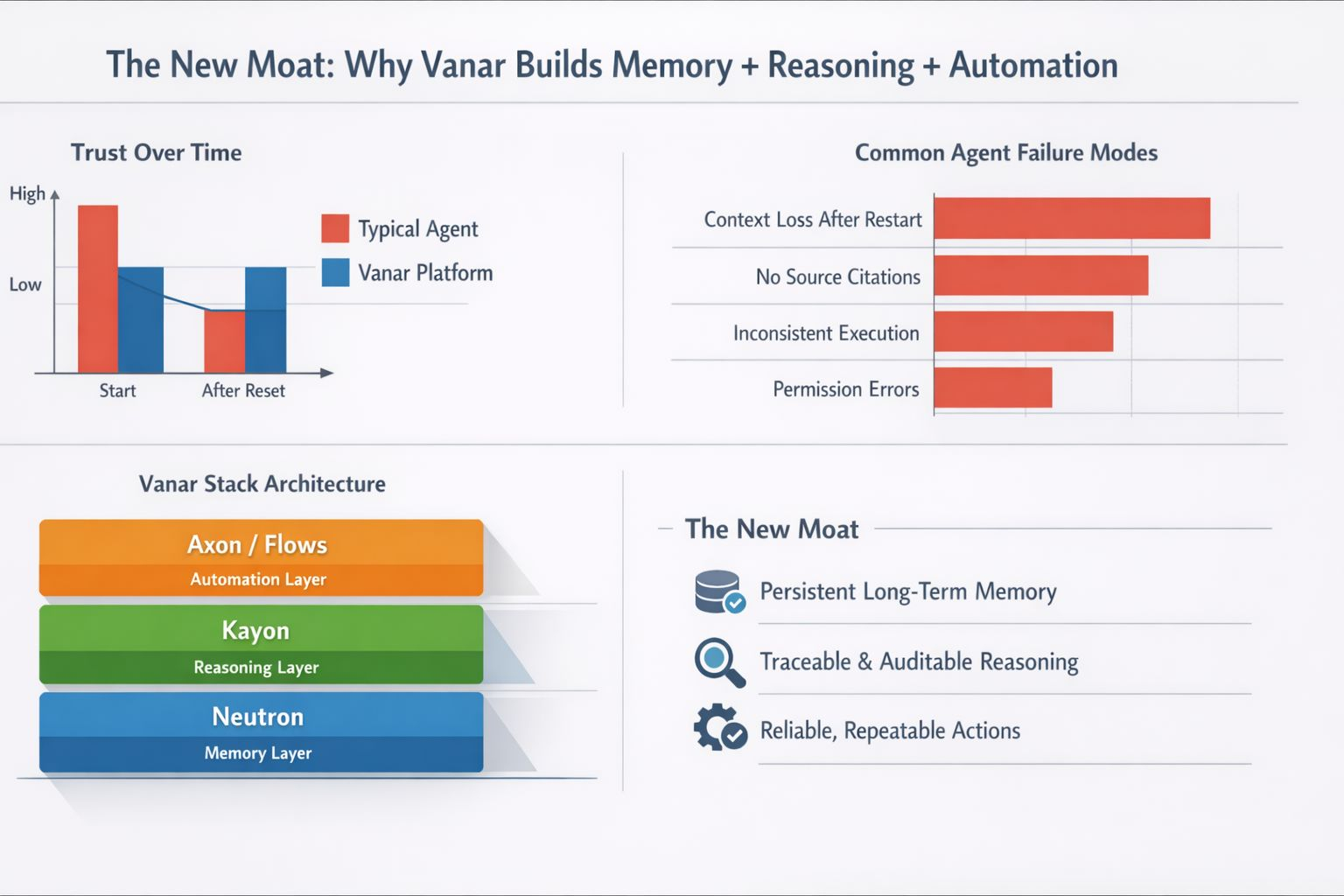

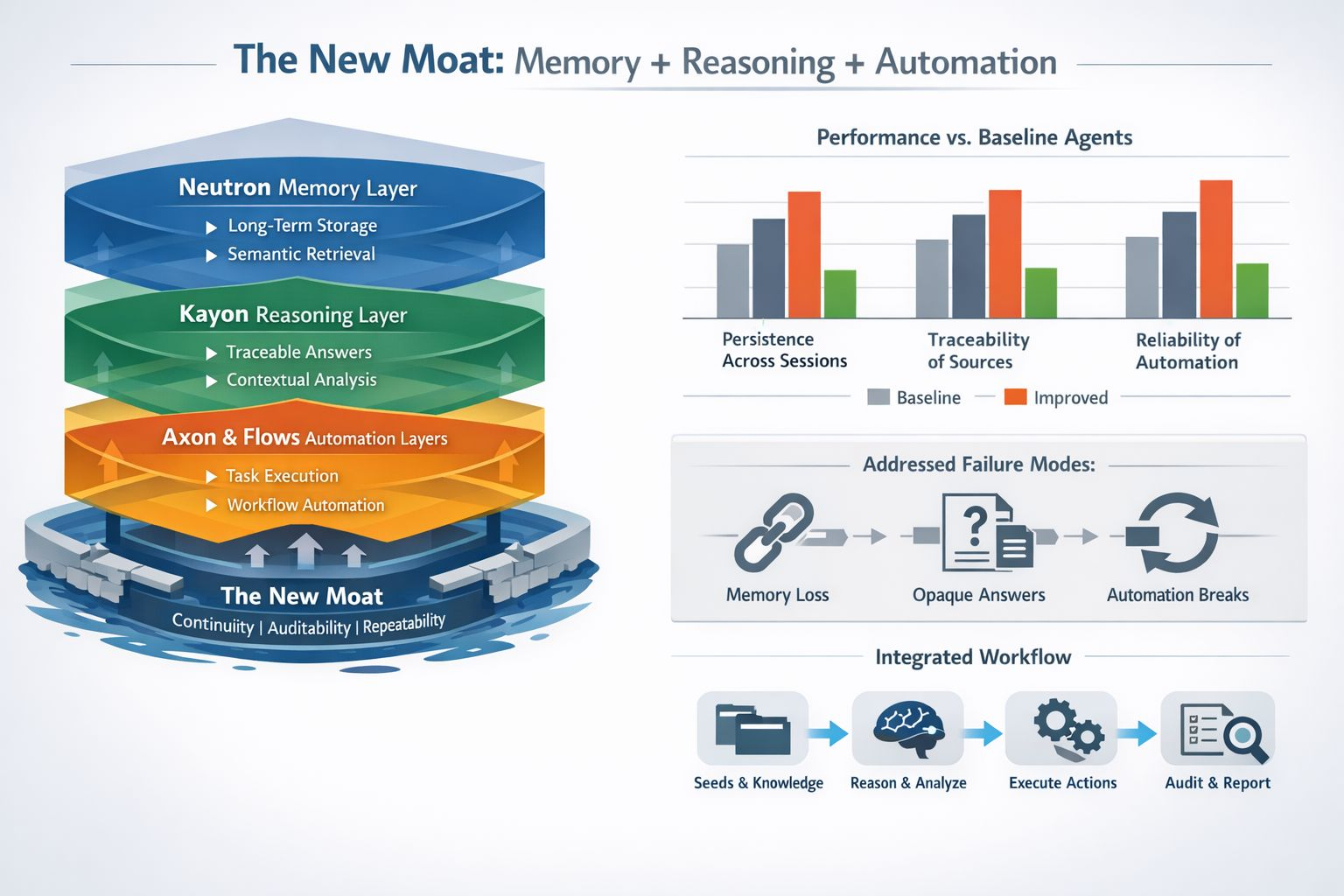

I keep coming back to a phrase I’ve started using as shorthand: “The New Moat: Why Vanar Builds Memory + Reasoning + Automation.” The hype around assistants has settled into a basic demand: people want tools that can carry work across days, not just answer a prompt. That’s why long-term memory is getting serious attention, and why the broader industry is moving to make memory a controllable, persistent part of the product rather than a temporary session feature.

But memory isn’t enough. I care because the moment it fails, I’m the one cleaning up. A system can remember plenty and still waste my time if it can’t decide what matters, or if it can’t show where an answer came from. When I think about a moat now, I don’t think about who has the flashiest model. I think about who can hold state over time, reason against it in a way I can audit, and then turn decisions into repeatable actions without breaking when the environment changes.

Vanar’s stack is interesting because it tries to separate those jobs instead of blending them into one chat window. In Vanar’s documentation, Neutron is framed as a knowledge layer that turns scattered material—emails, documents, images—into small units called Seeds. Those Seeds are stored offchain by default for speed, with an option to anchor encrypted metadata onchain when provenance or audit trails matter. The point is continuity with accountability, not just storage.
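To make the offchain-by-default, anchor-when-it-matters idea concrete for myself, I sketched it in a few lines of Python. This is not Neutron's API. `Seed`, `SeedStore`, and every field name here are hypothetical, the "ledger" is just a list standing in for an onchain log, and I use a plain SHA-256 digest of the metadata as the anchor instead of the encrypted metadata the docs describe.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class Seed:
    """Hypothetical unit of knowledge; field names are illustrative only."""
    seed_id: str
    source: str                   # e.g. "email", "document", "image"
    summary: str
    anchor: Optional[str] = None  # set only when provenance matters


def metadata_digest(seed: Seed) -> str:
    """Deterministic SHA-256 digest over the seed's metadata (anchor excluded)."""
    meta = {k: v for k, v in asdict(seed).items() if k != "anchor"}
    payload = json.dumps(meta, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class SeedStore:
    def __init__(self):
        self.offchain = {}  # stand-in for fast offchain storage
        self.ledger = []    # stand-in for an append-only onchain log

    def put(self, seed: Seed, anchor: bool = False) -> Seed:
        """Store the seed offchain; optionally record a digest on the ledger."""
        if anchor:
            seed.anchor = metadata_digest(seed)
            self.ledger.append(seed.anchor)
        self.offchain[seed.seed_id] = seed
        return seed

    def verify(self, seed_id: str) -> bool:
        """True only if the seed was anchored and still matches its digest."""
        seed = self.offchain[seed_id]
        return (
            seed.anchor is not None
            and seed.anchor in self.ledger
            and seed.anchor == metadata_digest(seed)
        )
```

In this toy version, an anchored seed verifies until someone edits its summary, at which point the stored digest no longer matches. An unanchored seed never verifies, which is the trade the default makes: speed first, audit trail only when asked for.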

That separation matters when you look at how most agents “remember” today. In many setups I’ve seen, memory is essentially plain text files living inside an agent workspace. That’s a sensible starting point, but it’s fragile. Switch machines, redeploy, or even just reopen a task a week later and the agent can behave like it’s meeting you for the first time. Vanar positions Neutron as a persistent memory layer for agents, with semantic retrieval and multimodal indexing meant to pull relevant context across sessions. If it works as designed, it targets the most common failure mode I see: the agent restarts, and the project resets to zero.
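The "restart and reset to zero" failure is easy to reproduce, and so is the simplest cure. The sketch below is my own illustration, not Vanar's implementation: `PersistentMemory` is a hypothetical class, the store is a single JSON file, and word overlap stands in for real semantic retrieval.

```python
import json
import tempfile
from pathlib import Path


class PersistentMemory:
    """Toy memory that survives restarts by living in a JSON file."""

    def __init__(self, path):
        self.path = Path(path)
        # Reload earlier notes if the file exists; otherwise start empty.
        self.notes = json.loads(self.path.read_text()) if self.path.exists() else []

    def remember(self, note_id, text):
        self.notes.append({"id": note_id, "text": text})
        self.path.write_text(json.dumps(self.notes))

    def recall(self, query, top_k=1):
        """Rank notes by words shared with the query (crude stand-in for semantic search)."""
        q = set(query.lower().split())
        scored = [(len(q & set(n["text"].lower().split())), n) for n in self.notes]
        scored = [s for s in scored if s[0] > 0]
        scored.sort(key=lambda s: s[0], reverse=True)
        return [n for _, n in scored[:top_k]]


# Session one writes; session two is a fresh object, as after a redeploy.
path = Path(tempfile.mkdtemp()) / "memory.json"
session_one = PersistentMemory(path)
session_one.remember("n1", "send revised quote to client by friday")
session_one.remember("n2", "book room for quarterly review")

session_two = PersistentMemory(path)
hits = session_two.recall("when is the client quote due")
print([h["id"] for h in hits])
```

The second session finds the note about the client quote even though it never saw it written, and each hit carries the id of the note it came from. Everything hard about the real problem (ranking quality, multimodal content, access control) is exactly what this sketch leaves out.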

Reasoning is the second layer, and Vanar ties that to Kayon. Kayon is described as the interface that connects to common work tools like email and cloud storage, indexes content into Neutron, and answers questions with traceable references back to the originals. That sounds like a minor feature until you’ve watched a team argue about what an assistant “used” to reach a conclusion. In real work, defensible answers matter. If I can move from a response to the underlying source material, I can trust the workflow without blindly trusting the model.

Automation is the moment an assistant moves from talking to acting, and that’s where trust gets tested. I don’t want an agent that’s ambitious. I want one that’s dependable—same handful of weekly jobs, done quietly, no drama. Kayon’s docs talk about saved queries, scheduled reports, and outputs that preserve a trail back to sources. Vanar also describes Axon as an execution and coordination layer under development, and Flows as the layer intended to package repeatable agent workflows into usable products. I’m cautious here, because “execution” is where permissions, error handling, and guardrails decide whether the system helps or harms.
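Here is roughly what I mean by permissions, error handling, and guardrails, written as a minimal sketch. Nothing in it comes from Axon or Flows. `GuardedRunner` and the action names are hypothetical, and a real system would need far more than an allowlist and a log.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class GuardedRunner:
    """Runs only allowlisted actions, supports dry runs, and logs every outcome."""
    allowed: set
    dry_run: bool = True  # default to not acting
    log: list = field(default_factory=list)

    def run(self, action: str, fn: Callable[[], str]) -> str:
        if action not in self.allowed:
            status, detail = "denied", "action not on allowlist"
        elif self.dry_run:
            status, detail = "skipped", "dry run"
        else:
            try:
                status, detail = "ok", fn()
            except Exception as exc:  # contain the failure and record it
                status, detail = "failed", str(exc)
        self.log.append((action, status, detail))
        return status


def send_weekly_report() -> str:
    return "report sent"


def flaky_sync() -> str:
    raise RuntimeError("storage unavailable")


runner = GuardedRunner(allowed={"weekly_report", "sync"}, dry_run=False)
print(runner.run("weekly_report", send_weekly_report))
print(runner.run("sync", flaky_sync))
print(runner.run("delete_inbox", lambda: "deleted"))
```

The design choice I care about is that nothing fails silently: a denied action, a skipped dry run, and a crashed job all leave a line in the log, so the cleanup I end up doing at least starts from a record.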

If Vanar’s bet holds, the moat isn’t a secret model or a clever prompt library. It’s the ability to build a private second brain that stays portable and verifiable, then connect it to routines people already run. I’ll still judge it the boring way—retrieval quality, access controls, and whether it can admit uncertainty. But the direction matches what I actually need: remember what matters, show your work, and handle the repeatable parts so I don’t have to.