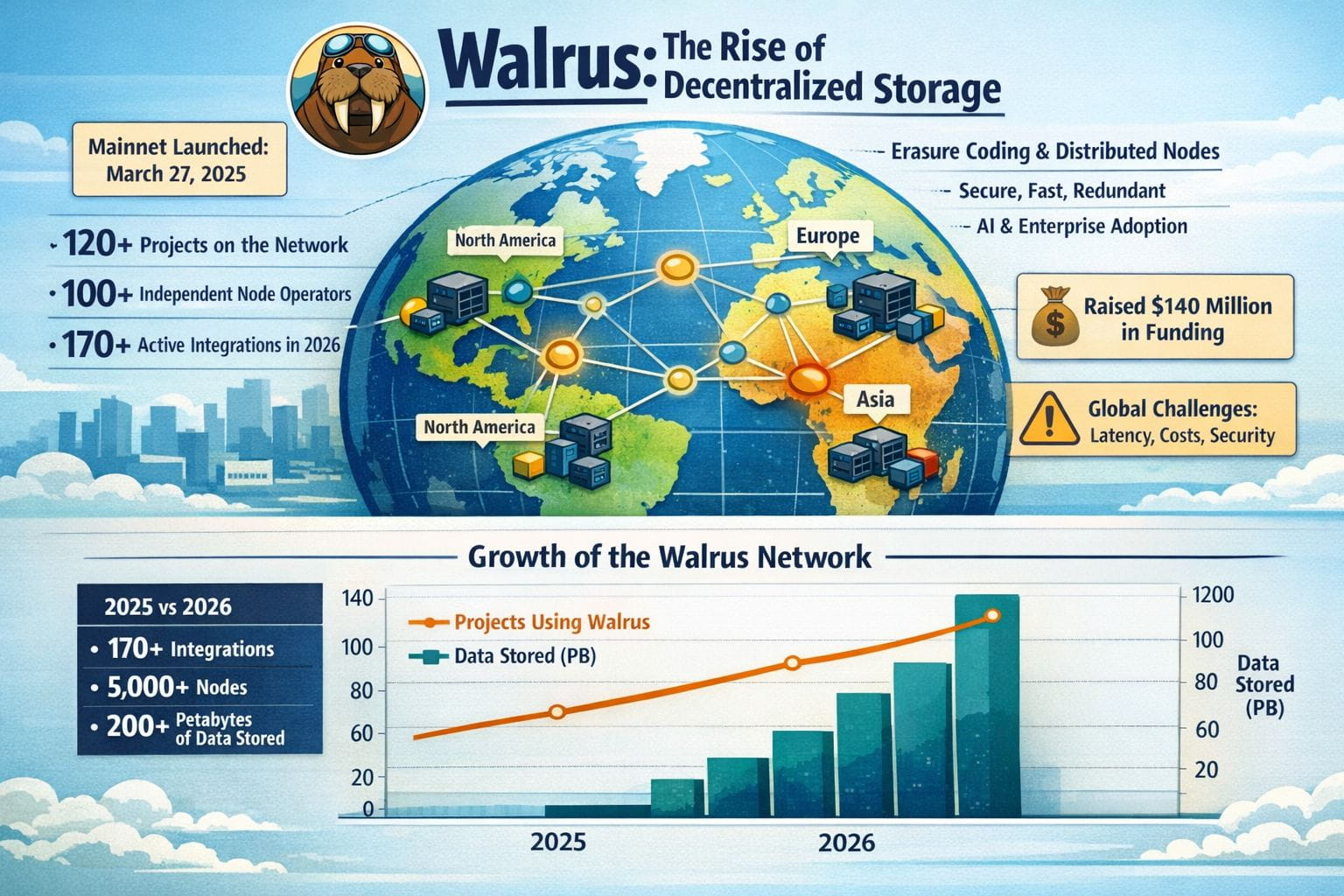

When Walrus launched its mainnet on March 27, 2025, many viewed it as yet another "decentralized storage will be fixed" story. A year later, the picture looks more serious. More than 120 projects are using the network, over 100 independent node operators support it, and several real-world platforms store their production data on it. But building a global storage network is among the most complex tasks in crypto, and Walrus illustrates both how quickly progress can be made today and the limits that cannot be engineered away.

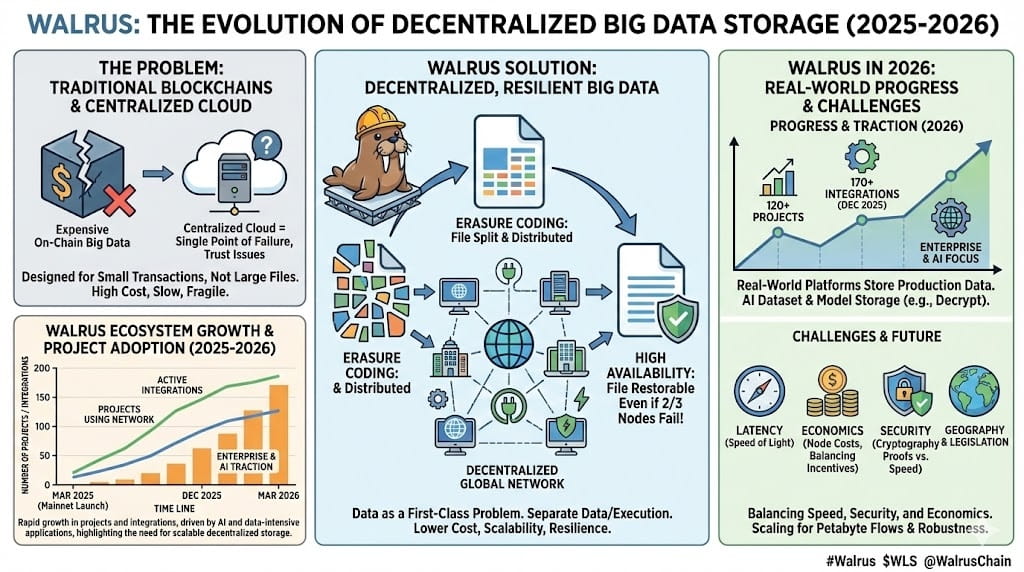

At its core, the Walrus team is trying to solve a basic but thorny problem: how do you store large files in a decentralized way without making it slow, expensive, or fragile? Traditional blockchains simply aren't built for this. Storing big files on-chain is costly and inefficient, so most applications end up relying on centralized cloud providers. That brings back a single point of failure and the very trust issues crypto was supposed to remove in the first place.

Walrus addresses this with erasure coding. Put simply, a file is encoded into many small pieces, and those pieces are distributed across many computers, or nodes, in a decentralized network. You don't need all of the pieces to restore your file; a sufficient subset will do. That means your files remain available even if many of those nodes go offline. In fact, they remain available even if up to two-thirds of the nodes go down!
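To make the "any subset will do" idea concrete, here is a minimal sketch of a textbook Reed-Solomon-style erasure code built on polynomial interpolation. It is not Walrus's actual encoding scheme. The field size `P`, the function names, and the choice of 9 shards with any 3 sufficient are all illustrative assumptions, picked so that losing two-thirds of the shards is still survivable.

```python
P = 257  # small prime field (assumption for the sketch); every byte value 0..255 fits


def _interpolate(points, x):
    """Evaluate at x the unique polynomial of degree < len(points) through `points` (mod P)."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        # pow(den, -1, P) is the modular inverse (Python 3.8+)
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def encode(data: bytes, k: int, n: int):
    """Encode data into n shards (lists of field symbols); any k shards reconstruct it."""
    padded = data + bytes(-len(data) % k)  # zero-pad to a multiple of k
    shards = [[] for _ in range(n)]
    for off in range(0, len(padded), k):
        block = padded[off:off + k]
        points = list(enumerate(block, start=1))  # data bytes sit at x = 1..k
        for s in range(n):
            shards[s].append(_interpolate(points, s + 1))  # shard s holds x = s + 1
    return shards


def decode(available: dict, k: int, length: int) -> bytes:
    """Rebuild the original bytes from any k surviving shards ({shard_index: symbols})."""
    chosen = sorted(available.items())[:k]
    if len(chosen) < k:
        raise ValueError("not enough shards to reconstruct")
    out = bytearray()
    for b in range(len(chosen[0][1])):
        points = [(idx + 1, shard[b]) for idx, shard in chosen]
        for x in range(1, k + 1):
            out.append(_interpolate(points, x))
    return bytes(out[:length])


# Hypothetical parameters: 9 nodes, any 3 are enough, so 6 of 9 may fail.
data = b"hello walrus"
shards = encode(data, k=3, n=9)
survivors = {i: shards[i] for i in (2, 5, 8)}  # six shards lost
restored = decode(survivors, k=3, length=len(data))
```

The trade-off in this toy version is storage overhead: nine shards each hold a third of the file, so the network stores three times the original size in exchange for tolerating six failures.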

But creating a global storage network takes a lot more than smart mathematics. Geography, network latency, hardware quality, legislation, and even human nature all count. A node in Singapore, a node in Frankfurt, and a node in São Paulo are very different beasts: each faces its own access times, risk of power failure, and regulatory environment. This is where theory meets reality.

Through 2025, Walrus expanded its node presence across several continents. As of December, it also counted more than 170 active integrations, along with growing enterprise traction, concentrated mainly in AI and other data-intensive applications. One reason is the growing number of AI tools: running many models and storing their data requires large amounts of storage capacity. Centralized platforms such as AWS have a strong hold on that market, but users increasingly point to the trade-off involved: dependence on a centralized service, often at a higher price point.

One problem should be obvious, however: latency. Retrieving data that is spread across the globe is inherently challenging. Walrus reduces latency through smart routing and redundancy, but it cannot escape the basic limits of physics. The speed of light has not changed.
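A rough back-of-the-envelope calculation shows how hard that floor is. The sketch below estimates the minimum round-trip time between the three cities mentioned earlier, assuming signals travel through optical fiber at about 200,000 km/s (roughly two-thirds of the speed of light in vacuum) along the shortest great-circle path. Real routes are longer and add switching delay, so actual latency is higher; the city coordinates are approximate and the function names are illustrative.

```python
import math
from itertools import combinations

FIBER_KM_PER_S = 200_000  # approx. speed of light in optical fiber (assumption)
EARTH_RADIUS_KM = 6371

# Approximate (latitude, longitude) in degrees
CITIES = {
    "Singapore": (1.35, 103.82),
    "Frankfurt": (50.11, 8.68),
    "Sao Paulo": (-23.55, -46.63),
}


def great_circle_km(a, b):
    """Haversine distance between two (lat, lon) points given in degrees."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def min_round_trip_ms(a, b):
    """Lower bound on round-trip time over an ideal straight fiber link."""
    return 2 * great_circle_km(a, b) / FIBER_KM_PER_S * 1000


for x, y in combinations(CITIES, 2):
    rtt = min_round_trip_ms(CITIES[x], CITIES[y])
    print(f"{x} <-> {y}: at least {rtt:.0f} ms round trip")
```

Even under these idealized assumptions, every intercontinental pair sits at tens of milliseconds or more per round trip, which is why routing to nearby nodes and fetching pieces in parallel matter so much.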

Another challenge is economics. Running a reliable storage node is not free: operators pay for hardware, bandwidth, electricity, and more. Walrus raised $140 million in March 2025 from major investors to build out infrastructure and incentives, which helps. Still, balancing operator costs against incentives is a delicate act, especially in a market where a few providers enjoy the benefits of massive scale.

Security adds another layer of complexity. With decentralized storage, it must be possible to verify at any time that each node is actually storing what it claims to store. Walrus does this with cryptographic proofs. However, the verification process must not bog the network down or open it to exploitation. This tension between security and usability or speed becomes progressively harder to manage as the network grows.
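The general idea behind such proofs can be illustrated with a generic Merkle-tree challenge-response, sketched below. This is a common textbook approach and not a description of Walrus's actual proof protocol; all function names are hypothetical. The verifier keeps only a small root hash, asks for a randomly chosen chunk, and checks the node's answer against that root.

```python
import hashlib
import secrets


def _leaf(chunk: bytes) -> bytes:
    return hashlib.sha256(b"\x00" + chunk).digest()  # prefix separates leaves from inner nodes


def _node(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(b"\x01" + left + right).digest()


def build_tree(chunks):
    """Return every level of the Merkle tree, leaf hashes first, root level last."""
    level = [_leaf(c) for c in chunks]
    levels = [level]
    while len(level) > 1:
        if len(level) % 2:
            level = level + [level[-1]]  # odd level: pair the last hash with itself
        level = [_node(level[i], level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def prove(levels, index):
    """Storage node side: sibling hashes linking leaf `index` to the root."""
    path = []
    for level in levels[:-1]:
        sibling = index ^ 1
        if sibling >= len(level):
            sibling = index  # this hash was paired with itself
        path.append(level[sibling])
        index //= 2
    return path


def verify(root, chunk, index, path):
    """Verifier side: recompute the root from the returned chunk and path."""
    current = _leaf(chunk)
    for sibling in path:
        current = _node(current, sibling) if index % 2 == 0 else _node(sibling, current)
        index //= 2
    return current == root


# The verifier stores only `root`; the storage node keeps chunks and tree levels.
chunks = [b"piece-0", b"piece-1", b"piece-2", b"piece-3", b"piece-4"]
levels = build_tree(chunks)
root = levels[-1][0]

challenge = secrets.randbelow(len(chunks))  # unpredictable chunk index
response_ok = verify(root, chunks[challenge], challenge, prove(levels, challenge))
```

Because the challenged index is unpredictable, a node that has discarded some of its data risks being caught on any given challenge, while the verifier's work stays logarithmic in the number of chunks. That cost profile is one way to keep verification from slowing the network down.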

The interesting thing about Walrus in 2026 is that it is now facing those challenges in a real-world environment rather than just a test environment. Platforms such as Decrypt store media on Walrus, and AI projects use it for dataset and model storage.

Why is this trend happening now? There are a few reasons. Storage is quickly becoming the quiet bottleneck in crypto. Scaling blockchains, roll-ups, gaming platforms, AI models: they all generate a tremendous amount of data. Decentralized storage just wasn't on many people's minds in 2024. By the end of 2025, though, it had become apparent how big a problem it would be for the larger crypto ecosystem if storage did not improve. Walrus sits right in the middle of this issue.

Market dynamics point the same way, starting with who is showing interest. Regulated finance cares a great deal about data integrity: its data cannot be left with entities that might disappear or alter it. Decentralized storage has obvious potential here if it is harnessed well. Accordingly, interest from health data analytics, prediction markets, and enterprise analytics began emerging across 2025.

From my observation of market cycles in cryptocurrencies, infrastructure projects typically seem uninteresting until they are suddenly impossible to ignore. I believe storage will follow the same pattern: it will be uninteresting until it becomes impossible to ignore, because if it fails, every project that depends on it is in jeopardy.

Walrus is still far from finished. Scaling node participation, improving global performance, and reducing costs are ongoing struggles. Nevertheless, it is worth recognizing what Walrus and its peers have accomplished in a short time. Hosting numerous fully decentralized websites and supporting hundreds of use cases demonstrates that decentralized storage is moving from abstraction toward reality.

The real test, though, will be sustained use. Will the network be able to handle ever-growing petabyte-scale flows? Will its robustness hold through market volatility, regulatory upheaval, and geopolitical uncertainty? These are the questions that will pose the greatest challenges to worldwide storage networks, and no whitepaper can answer them satisfactorily in advance.

What Walrus has demonstrated so far is that, with the right design considerations and engineering trade-offs that actually make sense in the real world, decentralized storage technology might get to where we want it to be. It might never replace traditional cloud-based systems entirely, but expecting that misses the point. A large enough slice of the market for secure, censorship-resistant storage could redefine the internet itself, particularly an internet that is increasingly defined by information.