The market lately feels like a crowded hallway where everyone is trying to walk faster than everyone else. New numbers, new charts, new claims that settle the argument in one screenshot. I still catch myself looking at them. Then I go back to the same question that has survived every cycle for me: did any of this make the human experience feel calmer, or did we just make uncertainty happen more quickly?

I did not rush to praise or dismiss FOGO when I first saw it framed around speed. I still cannot claim I have watched it through enough ugly incidents to call it proven. But there was one axis that kept pulling me back, because it is the axis that quietly decides whether a system feels usable under real load.

Where does the delay live, and who is forced to pay for it?

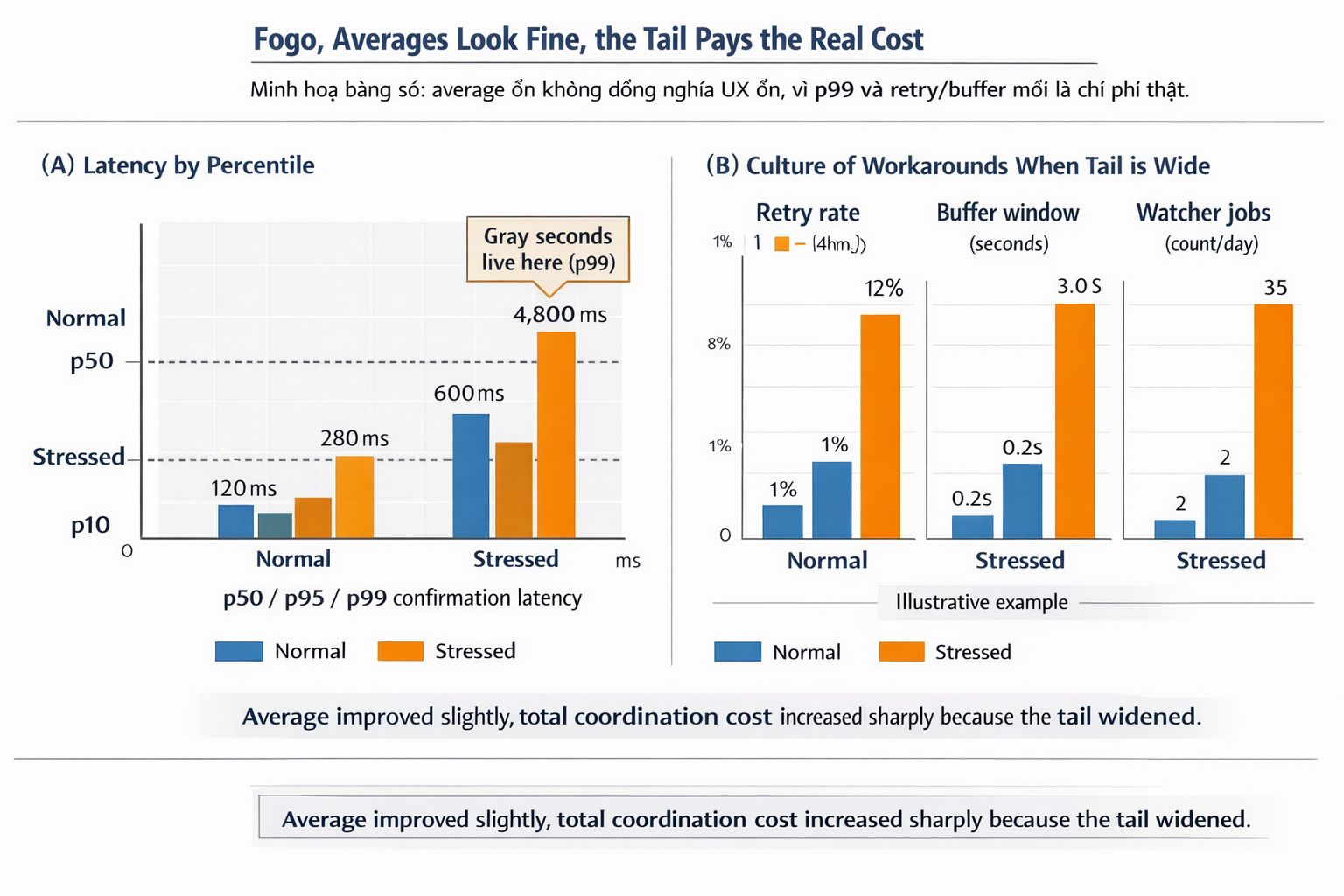

For a long time, I treated performance the way the market treats it: average throughput, average block time, average confirmation time. The problem is that users do not live in averages. Builders do not live in averages either. They live in the tail. They live in the few seconds where everything becomes ambiguous just long enough to force a decision.

Averages hide cost. Tails create habits.
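To make the averages-versus-tails point concrete, here is a minimal Python sketch with made-up latency samples (not FOGO measurements): two hypothetical systems with the same mean confirmation time but very different p99. The nearest-rank percentile method and the sample values are illustrative assumptions.

```python
import math
from typing import Dict, List


def percentile(samples: List[float], q: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least q% of samples at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(q * len(ordered) / 100))  # 1-based rank
    return ordered[rank - 1]


def summarize(samples: List[float]) -> Dict[str, float]:
    """Report the average alongside the median (p50) and the tail (p99)."""
    return {
        "mean": sum(samples) / len(samples),
        "p50": percentile(samples, 50),
        "p99": percentile(samples, 99),
    }


# Hypothetical confirmation latencies in milliseconds, 100 samples each.
steady = [400] * 100               # every confirmation takes 400 ms
spiky = [300] * 95 + [2300] * 5    # usually faster, occasionally much slower

print(summarize(steady))  # mean 400.0, p50 400, p99 400
print(summarize(spiky))   # mean 400.0, p50 300, p99 2300
```

A dashboard that reports only the mean sees two identical systems. An integrator who has to pick a timeout sees the 2,300 ms tail, and that tail is what ends up shaping the buffer they add.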

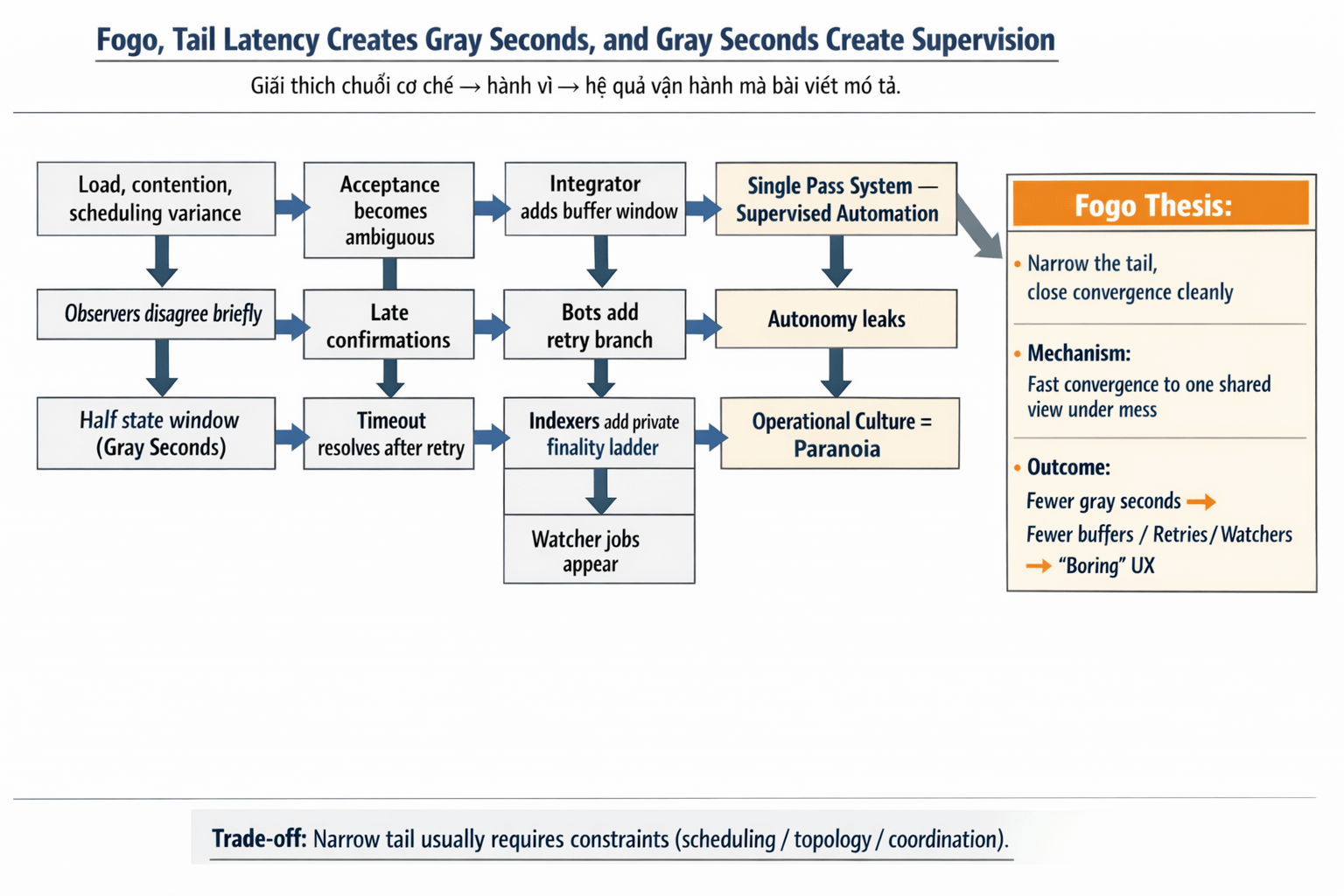

That is the part people do not like to talk about because it is not a headline. The worst operational pain is rarely a clean failure. It is the half state. The transaction that kind of succeeded. The confirmation that arrives late. The observer that disagrees briefly. The timeout that resolves after a retry. Nothing explodes. Nothing becomes a postmortem. But every integrator quietly adds a buffer. Every bot quietly adds a retry branch. Every workflow quietly adds a second layer of truth.

And then the product changes.

It stops being a single-pass system. It becomes supervised automation. Someone is always deciding whether to wait, retry, reroute, or reconcile. That decision becomes the real interface. Not the chain. Not the app. The gray seconds.

Gray seconds are where autonomy leaks.

Tail latency is not only about the network being slow. It is about variance. It is about contention and scheduling and the way congestion reshapes outcomes. Under load, the system does not just get slower. It gets less predictable. And unpredictability is what forces workarounds to appear.

If you have ever built automation on top of settlement, you know the sequence. The first workaround looks harmless. A small extra delay so you do not trigger the next step too early. A watcher job that waits for enough agreement. A buffer window that smooths brief disagreement between observers. A retry policy that turns an exception into the default. Over time, the tail does not just create operational cost. It creates operational culture.
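The workaround sequence above can be sketched as code. This is a hypothetical Python illustration, not any real SDK or FOGO API: `wait_for_agreement`, `submit_with_retries`, `lagging_observer`, and the observer callables are invented names, and the delay, poll, and quorum values are arbitrary. Each parameter corresponds to one of the workarounds the paragraph lists.

```python
import time
from typing import Callable, Optional, Sequence, Tuple

# Hypothetical observer interface: returns True if it currently sees tx_id as accepted.
Observer = Callable[[str], bool]


def wait_for_agreement(
    tx_id: str,
    observers: Sequence[Observer],
    quorum: int,
    buffer_s: float = 2.0,   # workaround 1: a fixed delay before trusting anything
    poll_s: float = 0.5,     # workaround 2: a watcher loop polling for agreement
    max_polls: int = 10,     # how long the watcher is allowed to babysit
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[int]:
    """Return the poll number at which `quorum` observers agreed, or None if they never did."""
    sleep(buffer_s)
    for poll in range(1, max_polls + 1):
        agreeing = sum(1 for observe in observers if observe(tx_id))
        if agreeing >= quorum:
            return poll
        sleep(poll_s)
    return None


def submit_with_retries(
    submit: Callable[[], str],
    confirm: Callable[[str], Optional[int]],
    max_attempts: int = 3,   # workaround 3: a retry branch that quietly becomes the default
) -> Tuple[Optional[str], int]:
    """Resubmit until `confirm` succeeds; return (tx_id or None, attempts used)."""
    for attempt in range(1, max_attempts + 1):
        tx_id = submit()
        if confirm(tx_id) is not None:
            return tx_id, attempt
    return None, max_attempts


def lagging_observer(ready_after: int) -> Observer:
    """Fake observer that only reports acceptance from its `ready_after`-th check onward."""
    checks = {"n": 0}

    def observe(tx_id: str) -> bool:
        checks["n"] += 1
        return checks["n"] >= ready_after

    return observe


def no_sleep(seconds: float) -> None:
    """Skip real waiting in this demo."""


observers = [lagging_observer(1), lagging_observer(2), lagging_observer(4)]
print(wait_for_agreement("tx-1", observers, quorum=2, sleep=no_sleep))  # 2
```

None of these pieces is wrong on its own. The point is that each one encodes a guess about how wide the tail is, and once shipped, those guesses tend to stay.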

FOGO is interesting to me if it is trying to attack that culture at the source. Not by making the average faster, but by making the tail less disruptive. By shrinking the window where the ecosystem is forced to interpret what accepted actually means.

This is where I look for something FOGO-specific. The design reads like it cares about cadence as a product surface, not just a benchmark. In other words, not only how fast blocks can be produced when things are clean, but how quickly the system converges to one shared view when things are messy.

That difference is the mechanism.

When convergence closes cleanly, downstream systems do not have to invent a private finality ladder. Indexers do not need special rules for what counts as stable. Integrators do not need to hold a buffer window just in case acceptance is still drifting. The stack stays single-pass longer than you expect, not because nobody is using it, but because the system leaves fewer moments where observers can disagree long enough to matter.

Execution can be fast and still be operationally expensive.

The question is whether speed stays coherent when the system is stressed. Whether the tail remains narrow enough that workflows do not learn paranoia. Whether retries remain rare enough that bots do not treat uncertainty as a strategy. Whether downstream systems can share a single view of acceptance without inventing private policies that become permanent.

That is the human experience layer nobody markets well. When the tail is wide, users feel it as lag and uncertainty. Builders feel it as defensive code and permanent buffers. Operators feel it as constant triage. They do not call it latency. They call it babysitting.

The inversion that matters is simple. You can reduce average time and still increase total cost if the tail gets worse. Because the tail is where the coordination cost concentrates. The tail is where humans get pulled back into the loop.
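A toy cost model shows how that inversion can happen. This Python sketch assumes, purely for illustration, that waiting costs one unit per millisecond and that every confirmation slower than a 2,000 ms threshold pulls a human in at a cost of 5,000 units; `total_cost` is a hypothetical helper, and none of these figures come from FOGO or any real network.

```python
from typing import List


def total_cost(latencies_ms: List[int], escalation_threshold_ms: int,
               cost_per_ms: int, cost_per_escalation: int) -> int:
    """Waiting cost grows with every millisecond; any confirmation slower than the
    threshold also triggers a fixed-cost human escalation."""
    waiting = sum(latencies_ms) * cost_per_ms
    escalations = sum(1 for ms in latencies_ms if ms > escalation_threshold_ms)
    return waiting + escalations * cost_per_escalation


# Hypothetical samples chosen only to illustrate the inversion.
before = [500] * 100             # mean 500 ms, no tail
after = [200] * 96 + [3000] * 4  # mean 312 ms, but a fat tail

for name, sample in (("before", before), ("after", after)):
    mean = sum(sample) / len(sample)
    cost = total_cost(sample, escalation_threshold_ms=2000,
                      cost_per_ms=1, cost_per_escalation=5000)
    print(name, mean, cost)
# before 500.0 50000
# after 312.0 51200
```

The average dropped by more than a third, yet total cost rose, because four slow confirmations out of a hundred were enough to outweigh every millisecond saved elsewhere.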

So my frame for FOGO is not, can it go fast. It is, can it stay boring.

Boring means outcomes converge cleanly. Boring means fewer half states. Boring means fewer reasons to ask: do we know this is accepted, or did we just observe it? Boring means you can ship a workflow without adding a watcher, a buffer window, and, a month later, a reconciliation job because “success” stopped feeling binary.

And yes, there is a real trade-off here, and it is the part people usually skip because it is harder to sell than speed.

Shrinking the tail usually means constraints. Constraints on scheduling. Constraints on topology. Constraints on how much variance the system is willing to tolerate. If the system tries to keep variance low under load, it often has to be more opinionated about how coordination happens. Builders who like pushing edges will feel that. Some forms of flexibility will become harder. Some forms of permissiveness will disappear.

Markets rarely reward that early because freedom is an easy narrative. Discipline is not.

But I have learned to treat the opposite as its own constraint. A system that feels flexible at the protocol layer can become brutally strict at the operational layer. It forces builders to carry uncertainty in their app. It forces integrators to build private buffers. It rewards the teams with the best routing, the best heuristics, the best infrastructure, the best babysitters.

That is not decentralization. That is decentralization of responsibility, into the place least suited to carry it.

Now the token. I mention it late on purpose, because it only matters if this design choice is real.

If FOGO is serious about narrowing the tail and keeping acceptance coherent under load, then coherence has an operating cost. Someone must run the enforcement path. Someone must bear the cost of fast coordination when demand spikes and incentives become adversarial. If the token matters, it should be coupled to that role: the operating capital that keeps participation disciplined and rewards the system for staying boring under stress.

If the token is not coupled to that operational reality, then the value leaks elsewhere. It leaks into privileged infrastructure. It leaks into private deals. It leaks into the teams that can afford to build around a wide tail. The chain can still look fast. The human experience will still feel supervised.

So I do not want to end with certainty. I want to end with a test that I can apply later.

When FOGO is stressed, does the tail stay tame enough that teams do not add buffer windows? Do watcher jobs remain rare? Do retries remain exceptions instead of becoming the default? Does the stack stay single-pass longer than you expect?

If it does, then the real product is not average speed. It is the removal of gray seconds. And that is the kind of performance that actually changes how infrastructure feels to humans.