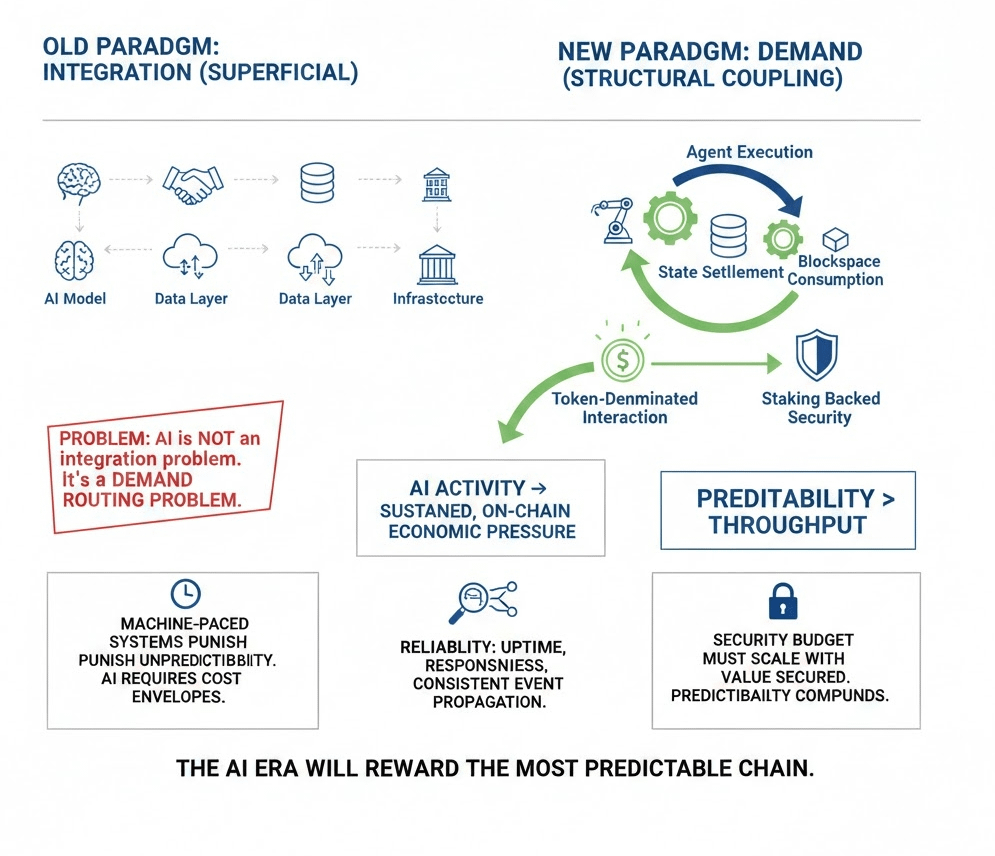

Most blockchain roadmaps frame AI as an integration milestone: add a model endpoint, announce a partnership, expose a data layer, and call it infrastructure. From an operator’s perspective, that framing is superficial. AI is not an integration problem. It is a demand routing problem.

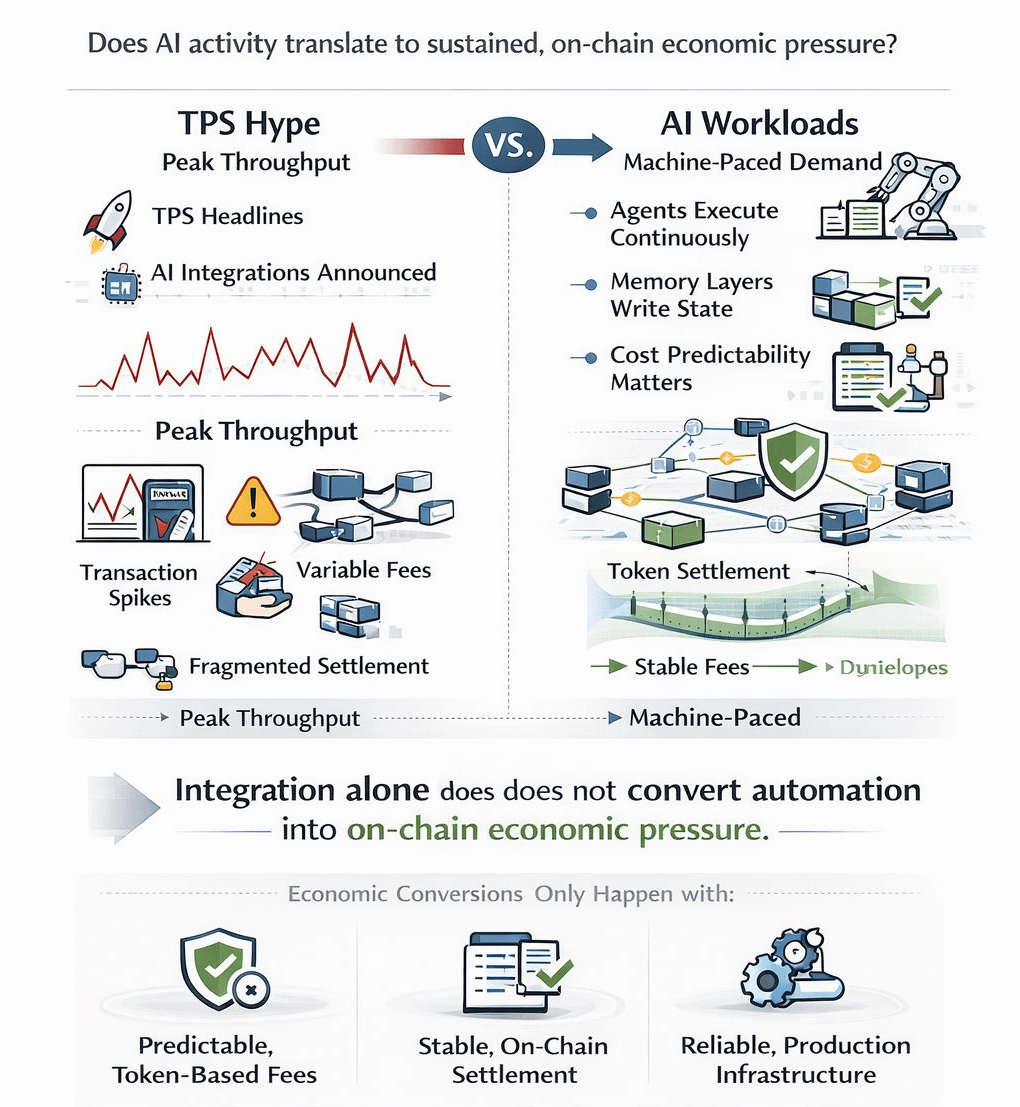

The dominant narrative assumes that if a chain can host AI workloads, value will naturally accrue. In production systems, infrastructure does not create demand by existing. It creates demand when usage forces recurring, unavoidable economic interaction. The real question for Vanar’s next phase is not whether it can technically support AI. It is whether AI activity converts into sustained, on-chain economic pressure.

Running AI systems teaches a simple lesson: automation magnifies variance. Agents execute continuously. Inference triggers transactions. Memory layers update context. Coordination loops settle state across components. Under these conditions, the system is no longer human-paced. It is machine-paced. And machine-paced systems punish unpredictability.

If a network cannot maintain a stable N-block confirmation window during agent burst load, or if inference-triggered traffic produces mempool repricing cascades, automated systems either stall or reroute. AI does not tolerate fee ambiguity. It requires cost envelopes that remain inside modeled bounds, even when concurrency spikes.
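To make "cost envelope" concrete, here is a minimal Python sketch of the check an agent might run before each transaction. It assumes the agent keeps a window of recently observed fees and knows the current confirmation depth; `CostEnvelope`, `decide`, and the thresholds are hypothetical names for illustration, not part of any Vanar API. "Defer" stands in for the stall case and "reroute" for the reroute case described above.

```python
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class CostEnvelope:
    """Hypothetical bounds an agent's cost model assumes before automating settlement."""
    max_fee: float           # highest per-transaction fee the model tolerates (token units)
    max_confirm_blocks: int  # the N-block confirmation window the agent plans around


def p95(samples: list[float]) -> float:
    # quantiles(n=20) returns 19 cut points; the last is the 95th percentile.
    # Requires at least two samples.
    return quantiles(samples, n=20)[-1]


def decide(recent_fees: list[float], confirm_blocks: int, env: CostEnvelope) -> str:
    """Return 'submit', 'defer', or 'reroute' for the agent's next transaction.

    Hypothetical policy: a confirmation window longer than planned breaks timing
    assumptions, so the agent reroutes; a fee tail outside the envelope breaks
    cost assumptions, so the agent waits instead of bidding into a repricing spike.
    """
    if confirm_blocks > env.max_confirm_blocks:
        return "reroute"
    if p95(recent_fees) > env.max_fee:
        return "defer"
    return "submit"


env = CostEnvelope(max_fee=2.0, max_confirm_blocks=6)
print(decide([1.0] * 20, 3, env))                # calm network -> submit
print(decide([1.0] * 10 + [10.0] * 10, 3, env))  # fee spike -> defer
print(decide([1.0] * 20, 9, env))                # slow confirmations -> reroute
```

Keying on a high percentile of recent fees, rather than the latest quote, makes the agent plan around the tail of the fee distribution, which is the part that breaks a budget under burst load.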

This is where the usual blockchain emphasis on peak throughput misses the point. AI does not fail because a chain’s TPS ceiling is modest. It fails because economic modeling becomes unstable under stress. If an agent cannot forecast settlement cost or timing with confidence, automation logic degrades. In distributed systems, uncertainty compounds faster than latency.

Converting AI infrastructure into economic demand therefore requires structural coupling. Agent execution, memory persistence, and state settlement must translate into predictable, token-denominated interaction. Not through forced friction, but through embedded necessity. If AI usage settles off-chain, batches through centralized sponsors, or abstracts fees away from the base layer, the network may appear busy while economic capture leaks elsewhere.

This is not a philosophical issue. It is an accounting issue. AI compute, storage, and inference are cost centers. If those costs are denominated externally while the chain remains a coordination surface, the token becomes peripheral. For AI to generate durable demand, meaningful activity must reinforce the economic core: blockspace consumption, staking-backed security, and recurring settlement.

Reliability becomes more than a virtue in this environment; it becomes a precondition. AI agents operate continuously and often concurrently. That stresses liveness assumptions. Node reachability, RPC latency stability under automated concurrency, and consistent event propagation matter more than vanity decentralization counts. A validator set that is large but operationally inconsistent introduces fragility. One that ties rewards to measurable service contribution (uptime, responsiveness, participation quality) signals production discipline.
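As an illustration of tying rewards to service contribution, the Python sketch below splits an epoch's reward pool by a weighted service score. The metric names, the weights, the 200 ms latency target, and the choice to ignore stake entirely are all assumptions made for the example; a real validator economy would combine service signals with stake and set parameters through its own governance.

```python
from dataclasses import dataclass


@dataclass
class ValidatorMetrics:
    name: str
    uptime: float           # fraction of the epoch the node was reachable, 0..1
    p95_response_ms: float  # observed response latency from a hypothetical probe
    participation: float    # fraction of expected votes/blocks actually signed, 0..1


# Hypothetical weights and latency target, chosen only for illustration.
WEIGHTS = {"uptime": 0.4, "responsiveness": 0.3, "participation": 0.3}
TARGET_MS = 200.0


def service_score(m: ValidatorMetrics) -> float:
    # Responsiveness is 1.0 at or under the target and falls off as latency grows past it.
    if m.p95_response_ms > 0:
        responsiveness = min(1.0, TARGET_MS / m.p95_response_ms)
    else:
        responsiveness = 1.0
    return (WEIGHTS["uptime"] * m.uptime
            + WEIGHTS["responsiveness"] * responsiveness
            + WEIGHTS["participation"] * m.participation)


def distribute(reward_pool: float, validators: list[ValidatorMetrics]) -> dict[str, float]:
    """Split the pool in proportion to service score (stake is deliberately ignored here)."""
    scores = {v.name: service_score(v) for v in validators}
    total = sum(scores.values())
    if total == 0:
        return {name: 0.0 for name in scores}
    return {name: reward_pool * s / total for name, s in scores.items()}


payouts = distribute(150.0, [
    ValidatorMetrics("steady", uptime=1.0, p95_response_ms=120, participation=1.0),
    ValidatorMetrics("flaky", uptime=0.5, p95_response_ms=400, participation=0.5),
])
print(payouts)  # steady earns roughly twice what flaky does
```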

Upgrade discipline follows the same logic. AI integrations introduce new state models, memory primitives, and contract surfaces. Each protocol adjustment becomes a potential semantic shift. In human-paced systems, a minor execution nuance may be inconvenient. In agent-driven systems, it can cascade. Upgrades must be staged, rollback-aware, and backward compatible by default. Semantic stability across versions is not conservatism; it is survival.

There is also a deeper economic alignment question. If AI infrastructure increases transaction volume but does not reinforce staking demand or long-term security incentives, the network may scale operationally without strengthening its defensive perimeter. In high-value automated environments, the security budget must scale with the value secured. Otherwise, economic activity grows while resilience lags.
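One rough way to track whether the security budget keeps pace is a coverage ratio: the cost of acquiring enough stake to attack the network, divided by the value that stake protects. The Python sketch below assumes an attacker needs one third of the stake (a threshold borrowed from BFT-style fault tolerance, not a statement about Vanar's consensus) and uses made-up snapshot figures; `Snapshot`, `security_coverage`, and `lagging_periods` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    period: str
    staked_tokens: float
    token_price: float    # in a reference currency
    value_secured: float  # value settled or held on-chain, same reference currency


def security_coverage(s: Snapshot, attack_fraction: float = 1 / 3) -> float:
    """Cost to acquire `attack_fraction` of stake, divided by the value that stake protects.

    attack_fraction = 1/3 is an assumption borrowed from BFT-style fault tolerance;
    the right threshold depends on the network's actual consensus design.
    """
    attack_cost = s.staked_tokens * s.token_price * attack_fraction
    return attack_cost / s.value_secured


def lagging_periods(history: list[Snapshot], floor: float = 1.0) -> list[str]:
    # Periods where corrupting the validator set would cost less than what it secures.
    return [s.period for s in history if security_coverage(s) < floor]


# Illustrative numbers only: activity quadruples while stake value stays flat.
history = [
    Snapshot("Q1", staked_tokens=60e6, token_price=0.5, value_secured=5e6),
    Snapshot("Q2", staked_tokens=60e6, token_price=0.5, value_secured=20e6),
]
print(lagging_periods(history))  # -> ['Q2']
```

The example shows the failure mode the paragraph describes: volume grows, stake does not, and coverage drops from about 2.0 to about 0.5.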

This is why converting AI into demand is not about feature velocity. It is about containment of leakage. Containment of fee variance. Containment of semantic drift. Containment of economic abstraction. The network must ensure that increased automation translates into increased settlement and security reinforcement, not into detached service layers.

From an operator’s lens, the signal to watch is simple: can autonomous systems run without recalibration? If agents can execute transactions during traffic bursts without triggering repricing cascades, if confirmation depth remains stable under concurrency, if RPC endpoints maintain latency consistency during inference spikes, then the infrastructure is behaving as a foundation rather than a prototype.
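The three signals above can be folded into a simple pass/fail report. The Python sketch below is a hypothetical operator check, assuming you already collect RPC latency samples at baseline and during a burst, the fees paid during the burst, and the confirmation depths observed; `burst_report` and every threshold in it are illustrative, not recommendations.

```python
from statistics import median, quantiles


def p99(samples: list[float]) -> float:
    # quantiles(n=100) returns 99 cut points; the last is the 99th percentile.
    return quantiles(samples, n=100)[-1]


def burst_report(baseline_ms, burst_ms, burst_fees, confirm_depths,
                 max_tail_ratio=2.0, max_fee_ratio=3.0, max_depth_spread=1):
    """Hypothetical check: did the network stay inside its envelopes during a burst?

    - RPC tail latency during the burst vs. median latency at baseline
    - peak fee during the burst vs. the burst's median fee (a crude repricing signal)
    - spread of confirmation depth observed across the burst
    """
    tail_ratio = p99(burst_ms) / median(baseline_ms)
    fee_ratio = max(burst_fees) / median(burst_fees)
    depth_spread = max(confirm_depths) - min(confirm_depths)
    return {
        "tail_ratio": tail_ratio,
        "fee_ratio": fee_ratio,
        "depth_spread": depth_spread,
        "stable": (tail_ratio <= max_tail_ratio
                   and fee_ratio <= max_fee_ratio
                   and depth_spread <= max_depth_spread),
    }


calm = burst_report([50] * 100, [60] * 100, [1.0] * 50, [6, 6, 7, 6])
spiky = burst_report([50] * 100, [60] * 95 + [500] * 5, [1.0] * 50, [6, 6, 7, 6])
print(calm["stable"], spiky["stable"])  # -> True False
```

Comparing tail latency against a baseline median, rather than checking averages, is the design choice that matters here: a handful of slow responses during a burst barely moves the mean but is exactly what forces an agent to recalibrate.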

None of this produces dramatic announcements. It produces predictability. And predictability compounds.

Vanar’s next phase will not be judged by the number of AI integrations announced. It will be judged by whether those integrations generate steady, observable economic interaction tied to the base layer. If AI workloads consistently consume blockspace within predictable envelopes and reinforce staking-backed security, infrastructure becomes demand. If not, AI remains a narrative layer sitting above unchanged economics.

The AI era will amplify the difference between experimentation and infrastructure. Autonomous systems do not care about slogans. They care about stable environments.

AI will not reward the fastest chain. It will reward the most predictable one.