The building is quiet in the way only office buildings can be quiet—too clean, too still, like it’s holding its breath. One person is awake. One chair pulled slightly off-center. One dashboard open on a wide monitor, the kind of dashboard everyone references and nobody fully believes. It has numbers that look familiar enough to lull you and sharp enough to wake you back up.

There’s a discrepancy. Small. Embarrassingly small. A few decimals that refuse to line up between what the chain says and what the internal ledger expects. It should be nothing. It never stays nothing. Because the moment money becomes payroll, contracts, clients, “close enough” becomes a liability. Close enough is how trust starts to fracture. Not dramatically. Quietly, first. Then all at once.

At 02:13 the incident report begins, because when you can’t sleep you either spiral or you write. Time. Scope. Symptoms. Systems touched. A line for “customer impact” that you hope will remain blank. A line for “root cause” that stays empty longer than it should.

The dashboard claims one balance. The finance sheet claims another. The difference is so small you almost want to apologize for noticing it. But that apology is exactly what gets teams hurt later. Production doesn’t care that the mistake is tiny. Production only cares that it is real.

So you do the first check: on-chain state versus off-chain read. Then the second: which system is authoritative for which decision. Then the third, the one that makes you look away from the screen for a second—who is authorized to fix it, and how, and with what proof that the fix wasn’t just another mistake.
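The first of those checks is small enough to write down. What follows is a minimal sketch, not the tooling from that night: `reconcile`, its parameters, and the sample figures are all hypothetical. It assumes the chain reports an integer count of base units and the ledger stores a decimal string, and it compares them exactly, with no tolerance, because a tolerance is only "close enough" with a configuration flag.

```python
from decimal import Decimal, localcontext


def reconcile(on_chain_base_units: int, token_decimals: int, ledger_amount: str) -> Decimal:
    """Return ledger minus chain as an exact Decimal. Zero means they match.

    All names here are illustrative assumptions, not a real API.
    """
    with localcontext() as ctx:
        # Raise precision well above the default 28 digits so large
        # balances at 18 decimals are never silently rounded.
        ctx.prec = 100
        chain_amount = Decimal(on_chain_base_units).scaleb(-token_decimals)
        return Decimal(ledger_amount) - chain_amount


# Hypothetical figures: the same base units read with the right and wrong precision.
matches = reconcile(1_234_567, 6, "1.234567") == 0
mismatch = reconcile(1_234_567, 18, "1.234567") != 0
```

The second call shows how a wrong precision assumption presents itself: the chain value is unchanged, and only the reading of it is off.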

That is where migration starts. Not in a roadmap document, not in a pitch, not in a tweet. In the boring place where it’s just you and the numbers and the knowledge that “transparency” is not a strategy.

Somewhere in the company’s history there was a phase where slogans were comforting. “Everything on-chain.” “Public by default.” They sounded clean, almost moral. But once real obligations ride on the ledger, you learn the hard truth: slogans fail at the exact moment you need them most. Not because transparency is bad. Because “transparent” is not a control. It’s a setting. And settings without boundaries become accidents.

Privacy, in the adult world, is often a legal duty. Not a vibe. Not a preference. A duty. Auditability is non-negotiable. Not because it’s fashionable, but because a serious system must be accountable to someone other than its own optimism.

And then you hit the sentence you wish more people wrote down earlier: public is not the same thing as provable.

The dashboard is public. It’s visible. It’s loud. But it’s not always provable in the way that matters. “Public” can mean everyone sees it. “Provable” means you can defend it. In a room with auditors. In a room with regulators. In a room where someone signs under risk and the signature has consequences.

This is why I keep returning to the audit-room image of a sealed folder.

In a real audit, you don’t throw every internal document into the street and call it honesty. You produce what is relevant, under rules, to authorized parties, with an evidentiary trail. The folder is sealed not to hide wrongdoing, but to prevent collateral harm: client positioning turning into competitor intel, salaries becoming leverage, trading intent turning into market conduct, negotiations being distorted by outsiders who don’t carry the same obligations.

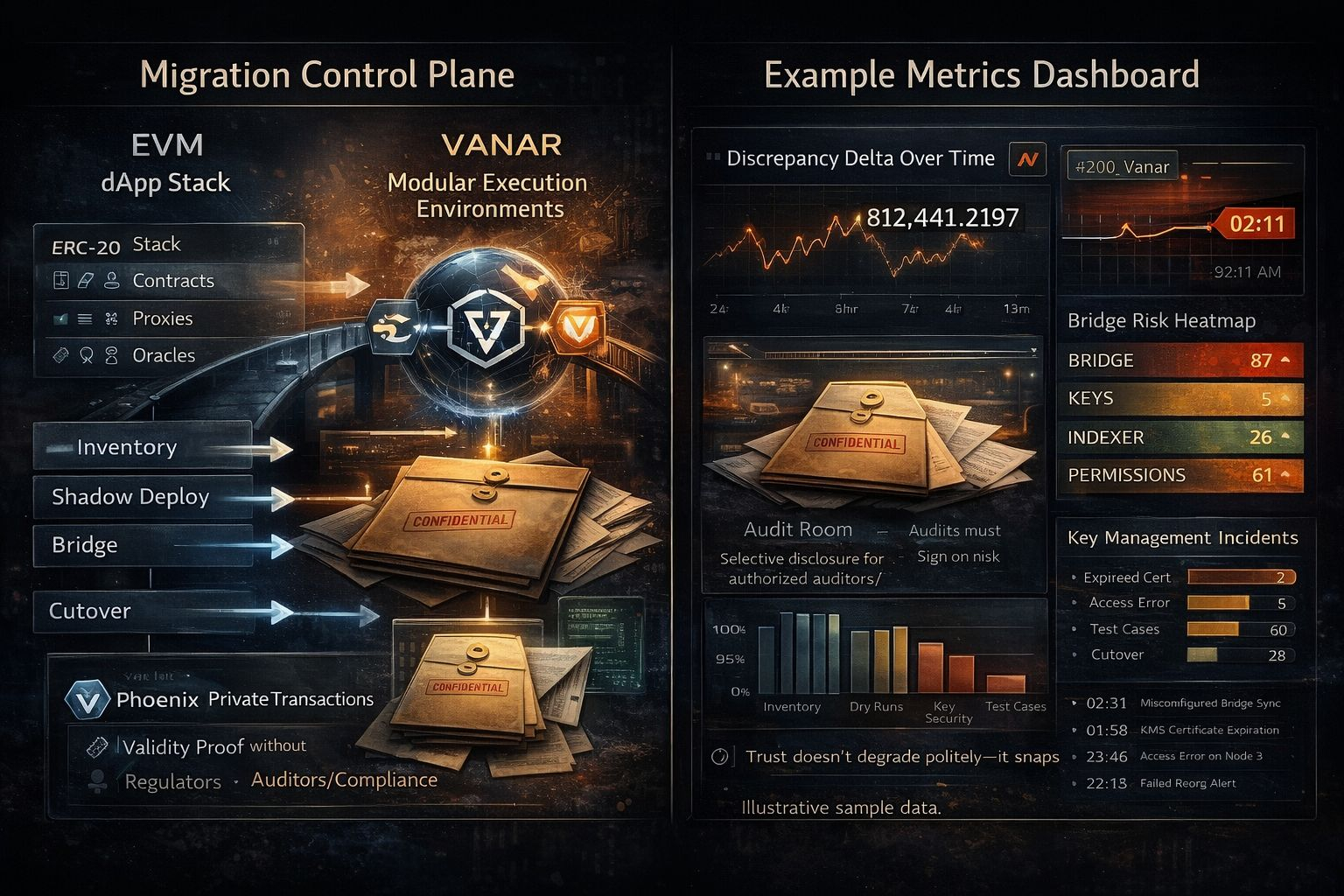

That sealed-folder idea should exist at the protocol level too. Selective disclosure for authorized parties—auditors, regulators, compliance—without dumping everything publicly. A way to say: this was valid, this was compliant, this followed the rules, and here is the proof—without forcing the world to watch every sensitive detail like it’s entertainment.

That’s where Phoenix private transactions fit, if you talk about them like an operator and not like a salesman.

Confidentiality with enforcement. That phrase is heavy on purpose. It means transactions can be private while still being constrained. Validity proofs without leaking details. Proof that the rules were followed, even when the specifics must stay sealed. Not “trust me.” Not “look away.” Proof. With boundaries.

And boundaries matter because indiscriminate transparency can cause real harm even when nobody is “doing anything wrong.” If a client’s flow reveals their strategy, you’ve harmed them. If salaries become public gossip, you’ve harmed your team. If treasury movements reveal trading intent, you’ve harmed your execution and invited bad actors to play games. If deal terms become globally readable, you’ve harmed negotiations and, sometimes, compliance itself.

Transparency without selectivity can turn into surveillance. And surveillance rarely stays neutral.

By 02:27 the discrepancy still isn’t resolved, but the mind has shifted into the deeper question: if we migrate, what are we migrating into?

Vanar, built for real-world adoption, feels like it was designed by people who have seen mainstream constraints up close—games, entertainment, brands. Those worlds teach you something early: you can’t ship systems that require your users to become ideological purists. You can’t ask normal people to live inside slogans. You need the chain to make sense when the user is tired, distracted, and just trying to do the thing they came to do.

So the architecture reads like something meant to survive real life: modular execution environments over a conservative settlement layer. A settlement layer that is supposed to be boring and dependable. That matters more than it sounds. Boring settlement is the part you can build your obligations on. The part you can tell your legal team about without watching their face change. The part you can rely on at 02:11 when a small discrepancy threatens sleep.

Separation is containment. Containment is safety. If an execution environment has a problem, you don’t want it to poison the entire system. You want the blast radius to be a design choice, not a surprise.

Now, EVM compatibility.

People talk about compatibility as if it’s convenience. For the person staring at a dashboard in the middle of the night, compatibility is fewer surprises. It’s familiar semantics. Familiar tools. Familiar failure modes. It’s a smaller cognitive migration, which is the kind you can actually manage.

Because migration isn’t “deploy contracts again.” Migration is moving assumptions. Moving trust. Moving responsibility.

The playbook, if you write it honestly, starts with inventory and ends with controls.

You inventory every contract. Every proxy. Every upgrade path. Every role. Every signer. Every oracle. Every external service that quietly holds your system together. The glue code, the indexers, the data pipelines, the admin panels, the “temporary” scripts that became permanent. You don’t judge. You list. Production doesn’t care that you intended to clean it up later.

Then you define what must remain true.

Balances must match. Permissions must be explicit. Upgrades must be predictable. Failures must be containable. The system must be auditable. Sensitive flows must be protectable. Recovery must exist. Revocation must exist. The ability to stop harm must exist, because humans are part of the system and humans are not perfect.

This is where $VANRY enters—not as price talk, not as charts, not as a promise. As responsibility.

If the chain’s economics involve staking, you treat staking like a bond. A bond is accountability. A bond is the cost of being wrong. A bond is a way to align behavior with consequences. In the adult world, that’s what matters. Not excitement. Not hype. Consequence.

And then you hit the sharp edges. The ones that cut teams.

Bridges and token migrations are never “just a bridge.” They are concentrated risk.

If you’re moving representations—ERC-20 or BEP-20 style tokens—into a native environment, you are translating meaning: canonical supply, mint/burn authority, redemption rules, address mapping, event indexing, wallets, exchanges, and the human expectation that “my balance is my balance.” One wrong mapping can make two tokens look like one. One missed confirmation step can turn a reversible support ticket into an unrecoverable loss.
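One of those failure modes, two tokens looking like one, can be checked before anything moves. The sketch below is an assumption about how a team might hold its mapping table, not a description of any real migration tool: `find_mapping_collisions` and the addresses are hypothetical placeholders. It lists every destination that more than one source token points to, so a human can confirm each case is intentional.

```python
from collections import defaultdict


def find_mapping_collisions(token_map: dict[str, str]) -> dict[str, list[str]]:
    """Return destinations that more than one source token maps to.

    Destinations are lowercased first, because the same hex address
    can be written in different letter cases.
    """
    by_destination: dict[str, list[str]] = defaultdict(list)
    for source, destination in token_map.items():
        by_destination[destination.lower()].append(source)
    return {dest: srcs for dest, srcs in by_destination.items() if len(srcs) > 1}


# Hypothetical placeholder identifiers, not real contract addresses.
mapping = {
    "eth:0xA1": "0xNATIVE01",
    "bsc:0xB2": "0xnative01",  # same destination, different letter case
    "eth:0xC3": "0xNATIVE02",
}
collisions = find_mapping_collisions(mapping)
```

A flagged entry is not automatically a bug. The same asset arriving from two chains may be meant to share one native representation. The point is that someone signs off on it instead of discovering it afterward.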

Key management is not a detail. It’s the system.

Humans forget. Humans rush. Humans assume. Humans copy-paste the wrong address with full confidence. The world outside doesn’t forgive that. The chain doesn’t either. Trust doesn’t degrade politely—it snaps.

So you harden the migration like you would harden an incident response plan.

You rehearse on test environments with production-like roles. You replay historical traffic to see how indexers behave under stress. You measure finality assumptions. You simulate outages. You plan rollback even if rollback is just “stop and contain.” You document who can push what, who can pause what, who can sign what, and how you prove afterward that they did it correctly.

And when you adopt private transactions, you don’t do it as decoration. You do it because real business constraints require sealed folders. Because “public” is not the same thing as “provable.” Because you need confidentiality with enforcement: validity proofs without leaking details.

At 02:41 the discrepancy finally resolves.

It’s a precision mismatch in an adapter—one part assuming 18 decimals everywhere, another respecting a different precision at the boundary. Small. Fixable. The chain was consistent. The glue wasn’t. The kind of thing you only notice when you stare long enough and refuse to accept “close enough” as a bedtime story.

It gets patched. A regression test is added. A note goes into the post-incident write-up that will sound paranoid to anyone who hasn’t been here: enforce precision through typed units; never assume decimals across systems.
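For anyone who wants that note in concrete form, here is one way typed units can look. It is a sketch under stated assumptions, not the patch that shipped: the `Amount` class and its methods are hypothetical. Every quantity carries its own precision, arithmetic across precisions is refused, and conversion happens only through an explicit call that fails instead of truncating.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Amount:
    """Integer base units tagged with the precision they are expressed in."""

    base_units: int
    decimals: int

    def rescale(self, target_decimals: int) -> "Amount":
        """Convert to another precision, refusing if any digits would be lost."""
        if target_decimals >= self.decimals:
            factor = 10 ** (target_decimals - self.decimals)
            return Amount(self.base_units * factor, target_decimals)
        divisor = 10 ** (self.decimals - target_decimals)
        if self.base_units % divisor != 0:
            raise ValueError("rescale would drop non-zero digits")
        return Amount(self.base_units // divisor, target_decimals)

    def __add__(self, other: object) -> "Amount":
        if not isinstance(other, Amount):
            return NotImplemented
        if other.decimals != self.decimals:
            # The adapter bug in miniature: mixed precision is an error,
            # not something to paper over with an implicit conversion.
            raise TypeError(
                f"precision mismatch: {self.decimals} vs {other.decimals} decimals"
            )
        return Amount(self.base_units + other.base_units, self.decimals)


# Crossing a boundary is explicit: 1.5 units at 6 decimals becomes 18 decimals.
six = Amount(1_500_000, 6)
eighteen = six.rescale(18)
```

The design choice is that the boundary becomes loud. Code that assumes 18 decimals everywhere stops running, instead of quietly producing a number that is almost right.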

The room stays quiet. There is no victory lap. There is only the relief of returning to boring.

And that’s the real point, the part the report accidentally turns into philosophy.

Boring is not the enemy. Boring is the goal.

A dependable settlement layer should be boring. Controls should be boring. Permissions should be boring. Audit trails should be boring. Revocation and recovery should be boring. The system should not depend on everyone being brilliant all the time. It should depend on checklists, containment, and proofs.

Because the adult world is made of obligations.

Compliance obligations. Contractual obligations. Obligations to employees who trust that payroll will arrive. Obligations to clients who trust that confidentiality won’t be sacrificed to ideology. Obligations to auditors and regulators who need proof, not performance.

In the end, everything collapses into two rooms that matter.

The audit room, where the sealed folder is how you stay accountable without becoming reckless—selective disclosure for authorized parties, proofs without leakage, “public” separated from “provable.”

And the other room, quieter, heavier, where someone signs under risk. Where permissions and controls aren’t theoretical. Where revocation and recovery aren’t optional. Where the question isn’t whether the chain is exciting, but whether it is governable, explainable, defensible.

That’s the migration playbook if you strip it down until it stops trying to impress you.

Make settlement boring and dependable. Keep execution modular so problems are contained. Use EVM compatibility to reduce surprises. Treat $VANRY staking as a bond—accountability, not spectacle. Use Phoenix private transactions as confidentiality with enforcement: validity proofs without leaking details. Design for selective disclosure like a sealed folder. Respect the sharp edges—bridges, key management, human error—because that’s where systems bleed.

Then you close the laptop.

Not because everything is perfect.

Because you’ve built something that can survive the imperfect people who have to run it.