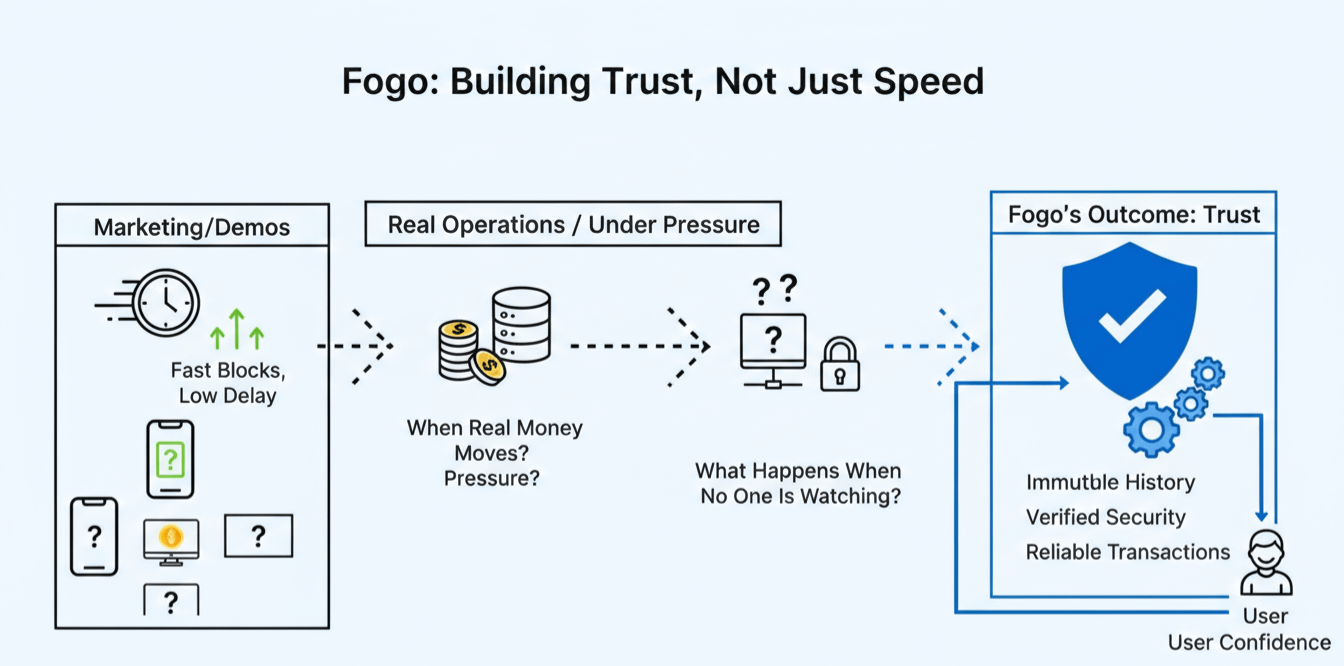

Most people meet a blockchain through an app, a token chart, or a single transaction that either lands instantly or gets stuck long enough to feel embarrassing. But the real story, the one that decides whether adoption lasts, usually sits lower than the parts we talk about. Infrastructure is the deciding layer because it is where performance becomes habit. It is where reliability turns into trust. A smooth user experience is not a feature you bolt on later. It is the consequence of how execution, networking, and validation behave when the system is under real pressure, not when it is being demoed.

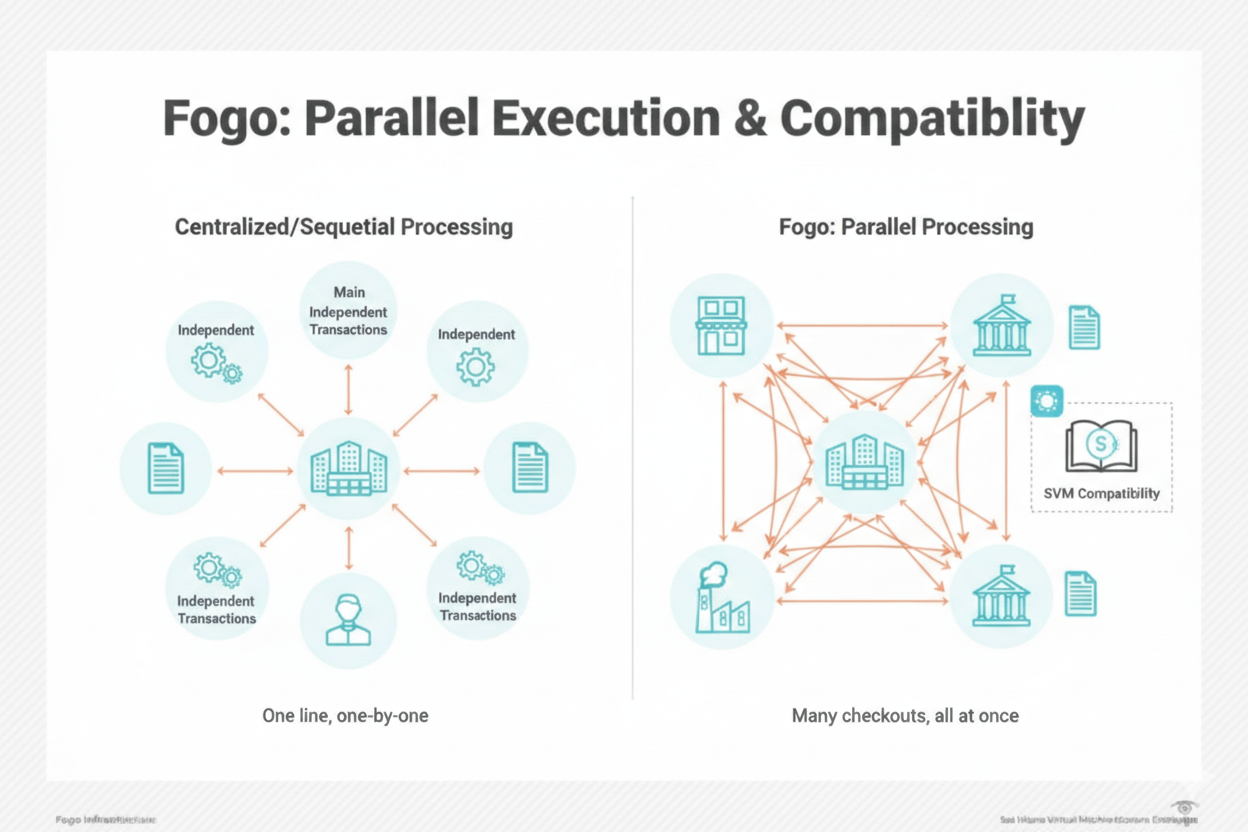

Fogo is a high-performance Layer 1 built on the Solana Virtual Machine. That choice matters because the SVM execution model is designed for parallelism. In plain terms, it tries to do many things at the same time instead of forcing everything through a single narrow line. When transactions touch different pieces of state that do not conflict, they can be processed in parallel rather than queued behind one another. This is not just about speed for its own sake. It is about keeping latency low and throughput high while staying consistent, even when demand spikes and the mempool would normally turn into a traffic jam.
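To make the idea of non-conflicting transactions concrete, here is a minimal sketch in Python. It assumes each transaction declares up front which pieces of state it reads and writes, then groups transactions into batches whose members could safely run side by side. The `Tx` type, the `conflicts` rule, and the `schedule` function are hypothetical names chosen for illustration; this is not Fogo's or the SVM's actual scheduler.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    """A hypothetical transaction with declared read and write sets."""
    name: str
    reads: frozenset = frozenset()
    writes: frozenset = frozenset()


def conflicts(a: Tx, b: Tx) -> bool:
    """Two transactions conflict if either writes state the other reads or writes."""
    return bool(
        a.writes & (b.reads | b.writes)
        or b.writes & (a.reads | a.writes)
    )


def schedule(txs):
    """Group transactions into ordered batches.

    Transactions inside one batch do not conflict, so they could run in
    parallel. Each transaction is placed in the batch right after the last
    batch that contains a conflicting transaction, which keeps conflicting
    transactions in their original relative order.
    """
    batches = []
    for tx in txs:
        last_conflict = -1
        for i, batch in enumerate(batches):
            if any(conflicts(tx, other) for other in batch):
                last_conflict = i
        target = last_conflict + 1
        if target == len(batches):
            batches.append([])
        batches[target].append(tx)
    return batches


if __name__ == "__main__":
    txs = [
        Tx("swap_1", writes=frozenset({"pool_A", "alice"})),
        Tx("swap_2", writes=frozenset({"pool_B", "bob"})),
        Tx("swap_3", writes=frozenset({"pool_A", "carol"})),
        Tx("read_price", reads=frozenset({"pool_B"})),
    ]
    for i, batch in enumerate(schedule(txs)):
        print(i, [tx.name for tx in batch])
```

In this toy run, `swap_1` and `swap_2` touch different pools and share a batch, while `swap_3` has to wait for `swap_1` because both write to `pool_A`. The more transactions touch unrelated state, the fewer batches are needed, which is the intuition behind parallel execution keeping unrelated activity from queuing behind itself.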

Low latency changes the feel of on-chain activity. It reduces the gap between intent and outcome. If you submit an order, you want it to land where you expected, not after the market has moved and the price is now a memory. High throughput changes what is possible. It allows a network to support dense, continuous activity without forcing users into bidding wars for block space. Parallel processing is what connects those dots. It can make execution less fragile under load because the system is not constantly choking on unrelated transactions that could have been handled side by side.

This is where the conversation becomes practical. On-chain order books are unforgiving. They are not like occasional swaps where a few seconds of delay is annoying but survivable. An order book is a living structure. Quotes update constantly, fills happen in tight sequences, and liquidity disappears the moment it becomes stale. If execution is unpredictable, market makers widen spreads or leave. If finality is slow, traders hesitate or move off-chain. If congestion spikes randomly, the entire experience becomes a guessing game. A chain that can keep latency low and performance steady makes on-chain order books less of a novelty and more of a serious venue.

High-frequency trading on-chain has similar requirements, even if the phrase itself can sound intimidating. What it really means is repeated, rapid interaction with the system: placing, canceling, updating, hedging, and routing across venues. This kind of activity exposes every inconsistency. When block times drift, when execution ordering becomes chaotic under load, or when fees jump without warning, strategies that rely on tight timing break down. Traders adapt by slowing down, reducing size, or shifting volume elsewhere. The result is thinner markets and worse prices for everyone. Predictable execution is not a luxury here. It is the base condition for fair competition and stable liquidity.

Real-time liquidity routing depends on the same foundation. If a router is trying to find the best path across pools, it needs fresh information and high confidence that its transaction will not arrive late. Otherwise routing becomes reactive instead of proactive, chasing opportunities that have already vanished. When latency is consistently low and congestion is controlled, routers can make decisions that remain valid when they hit the chain. That improves settlement quality, reduces failed transactions, and lowers hidden costs that users often mistake for “slippage” when it is really timing risk.
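The difference between slippage and timing risk can be shown with a small worked example. The sketch below assumes a fee-free constant-product pool (x * y = k), which is an illustrative choice and not something the discussion above specifies. The function names and the reserve figures are hypothetical. It splits the shortfall on a swap into the part caused by the trade's own size and the part caused by the pool moving between quote and execution.

```python
def swap_out(reserve_in: float, reserve_out: float, amount_in: float) -> float:
    """Output amount of a fee-free constant-product swap (x * y = k)."""
    return reserve_out * amount_in / (reserve_in + amount_in)


def cost_breakdown(
    reserve_in: float,
    reserve_out: float,
    amount_in: float,
    reserve_in_late: float,
    reserve_out_late: float,
) -> dict:
    """Split the shortfall on a swap into price impact and timing cost.

    reserve_in / reserve_out are the reserves the router saw when quoting.
    reserve_in_late / reserve_out_late are the reserves when the swap
    actually executes.
    """
    # Output if the whole trade filled at the marginal price seen at quote time.
    spot = amount_in * reserve_out / reserve_in
    # Output the router expected, including the trade's own price impact.
    quoted = swap_out(reserve_in, reserve_out, amount_in)
    # Output actually received once the pool has moved.
    realized = swap_out(reserve_in_late, reserve_out_late, amount_in)
    return {
        "price_impact": spot - quoted,
        "timing_cost": quoted - realized,
    }


if __name__ == "__main__":
    # Hypothetical pool: 1000 units on each side when the quote is taken.
    # Before execution, another trader sells 50 units into the same side.
    late_in = 1050.0
    late_out = 1000.0 * 1000.0 / late_in  # keeps x * y = k
    print(cost_breakdown(1000.0, 1000.0, 10.0, late_in, late_out))
```

If the swap executes against the same reserves it was quoted on, `timing_cost` is zero and only the trade's own price impact remains. Any gap that appears once the pool has moved is the timing component, which shrinks as the delay between quote and execution shrinks.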

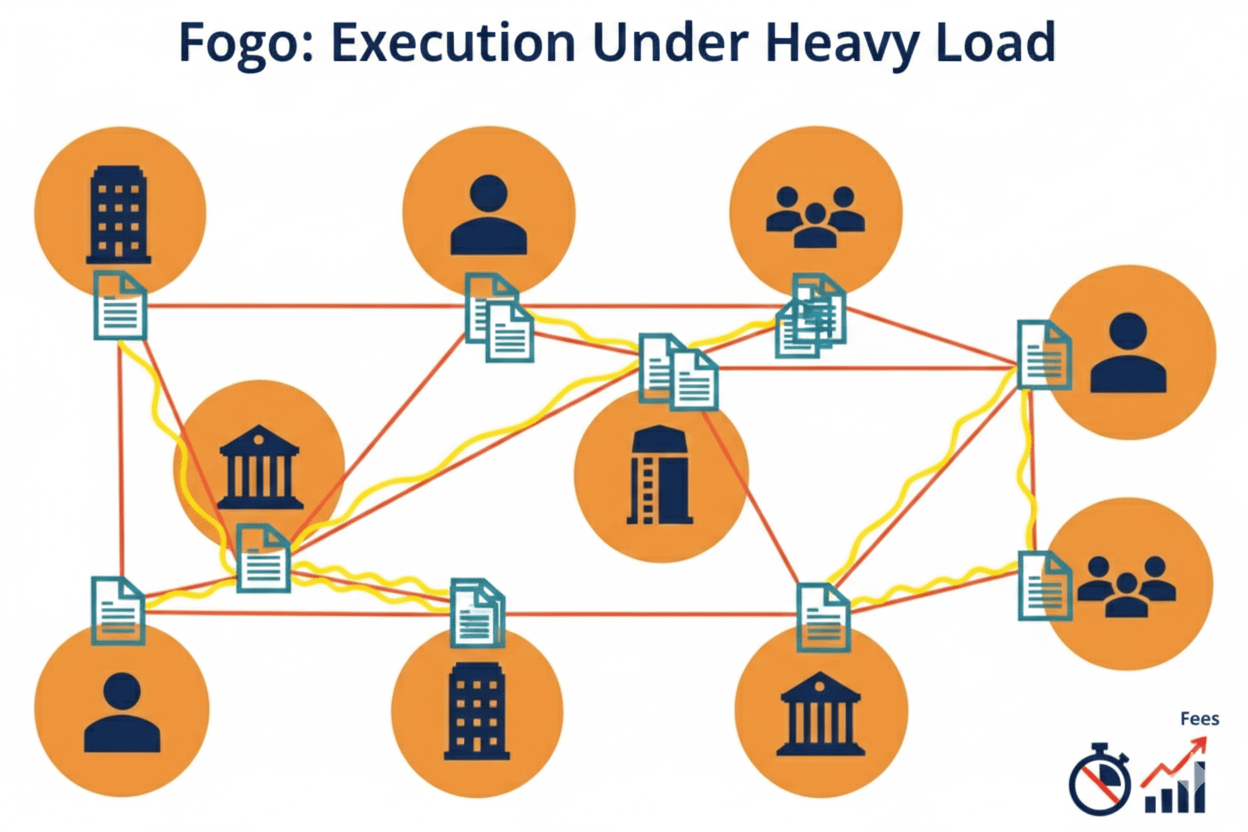

Scalable DeFi settlement is where these mechanics compound. DeFi is not only about trading. It is also about collateral updates, liquidations, rebalancing, interest rate adjustments, and the quiet bookkeeping that keeps protocols solvent. Under heavy market movement, settlement load explodes. If a chain slows down right when the system most needs to be responsive, risk increases. Liquidations arrive late, bad debt grows, and confidence cracks. A high-performance execution environment matters because it can process more state updates without turning into a bottleneck. Just as importantly, it can do so in a way that is consistent, so protocols can design risk parameters around behavior that does not change dramatically from one day to the next.

There is another angle that is easy to miss: the kinds of applications teams dare to build when the base layer behaves like a dependable machine. When execution is fast but unpredictable, developers end up designing around worst cases, adding buffers, delays, and extra confirmations. The product becomes slower than it needs to be because it is built on caution. When execution is predictably fast, design becomes more direct. You can build flows that feel closer to real time, because they actually are.

This is especially clear with AI-integrated dApps, where the value often comes from reacting quickly to changing conditions. People imagine AI in crypto as a flashy assistant, but the more serious use is decision automation driven by live data: risk engines that adjust exposure, vaults that rebalance based on market microstructure, agents that route liquidity or manage positions across protocols, and monitoring systems that act the moment a threshold is crossed. None of this works well if the chain is frequently congested or if transaction inclusion is uncertain. Real-time data processing has to meet real-time execution. When the base layer stays responsive under load, smart contracts can react while the signal is still meaningful. The result is less lag, fewer wasted transactions, and automation that feels less like a batch job and more like a live system.

AI-driven applications also tend to create bursts of activity. They do not always act smoothly. They may respond to events, volatility, or sudden changes in liquidity. That can lead to synchronized transaction spikes from many agents at once. In weaker environments, these bursts cause congestion, then congestion changes outcomes, then agents adjust again, and the system enters a feedback loop. In a stronger environment, the chain absorbs the burst with fewer surprises. That stability makes automation safer, because the difference between a good decision and a bad one is often just whether execution arrives on time.

GameFi shows the same principle in a different outfit. Games are not patient. Players do not think in block times. They think in moments. When a match ends, rewards should settle quickly. When an item is transferred, it should feel immediate. When a player crafts, upgrades, or trades, they expect continuity, not a loading screen that lasts long enough to break immersion. Rapid state updates matter because game worlds are state-heavy. They involve inventories, positions, cooldowns, and a constant stream of small actions. If each of those actions competes for scarce throughput, the game becomes expensive or sluggish, and either outcome pushes players away.

Smooth asset transfers are only part of it. Peak load stability is the real test. A game can look fine during quiet hours and still fail at the moment of a tournament, a mint, or a major update when everyone shows up at once. If the network collapses into congestion, players experience delays, failed actions, or inconsistent outcomes, and they do not interpret that as “network conditions.” They interpret it as the game being broken. Reliability under heavy demand is what keeps a GameFi economy honest. It prevents situations where only the most aggressive users, or the ones willing to overpay, can participate during peak moments.

Across trading, DeFi settlement, AI-driven automation, and games, the shared requirement is not just raw speed. It is consistency. It is the confidence that the chain will behave tomorrow the way it behaved today, even when activity doubles. Predictable execution is what allows builders to set expectations and users to form habits. Reliability is what allows the network to carry serious value without turning every market event into a stress test. This is also where the conversation about decentralization becomes more mature. A chain can have a large number of participants and still be unreliable, and an unreliable system does not serve the people using it. Sustainable adoption is not a slogan. It is a systems problem.

None of this requires inflated language. It requires engineering that holds up under pressure and a design that treats congestion as a core issue, not an occasional inconvenience. If you look at @Fogo Official and the way people talk about $FOGO, the most interesting part is not hype. It is the implied bet that infrastructure can be built to stay steady when it matters, and that this steadiness will unlock applications that would otherwise remain theoretical.

In the end, the infrastructure layer is the quiet judge of every promise made above it. Applications can be clever, interfaces can be beautiful, and tokens can attract attention, but the base layer decides whether those things remain usable when real demand arrives. When execution is parallel, latency stays low, throughput remains high, and behavior stays predictable under heavy load, builders stop fighting the chain and start building on it. That is why infrastructure, more than any single app, quietly determines whether everything above it can truly scale.